Comet

Platform for tracking, visualizing, and managing machine learning experiments.

The platform provides a unified interface for logging, visualizing, and comparing machine-learning experiment runs. It captures detailed trace data from model training and inference. For AI agents, users can examine each step of execution, from context retrieval to tool selection and feedback scoring. Built-in test suites let developers define assertions in plain English. Traces are then evaluated against these assertions automatically, and pass/fail outcomes are reported.

In addition to observability, the system offers automated remediation through a coding agent that analyzes test results, suggests fixes, and writes code changes back to the repository with version‑control integration. A sandboxed playground enables safe experimentation with modified agents, supporting stakeholder review and deployment of selected versions.

The service also extends to production monitoring, tracking model costs, governance metrics, and performance consistency at scale. It is aimed at data scientists, machine-learning engineers, and AI developers who need to track, debug, and iterate on model training and deployment pipelines.

Similar apps

AI Coding Agents

Opik

Evaluate, test, and ship LLM applications with a suite of observability tools to calibrate language model outputs across your dev and…

AI Coding Agents

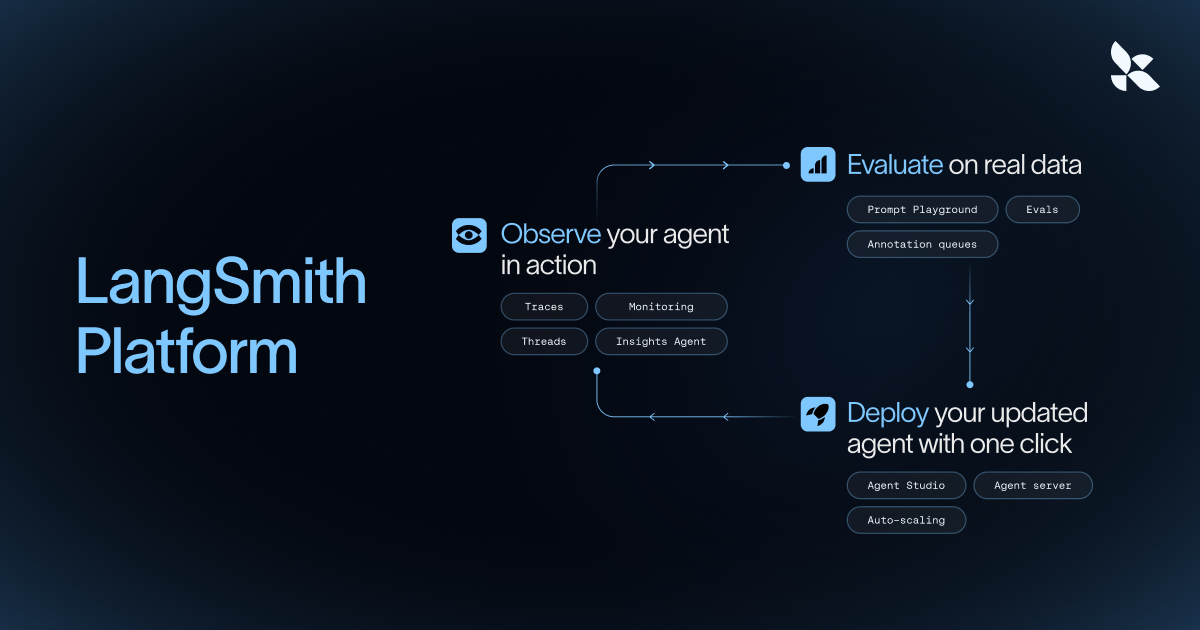

Langsmith

Observability platform for LLM applications, tracking prompts, latency, and costs.

Network & Connectivity

Open Comet

The autonomous AI browser agent for deep research & tasks

AI Coding Agents

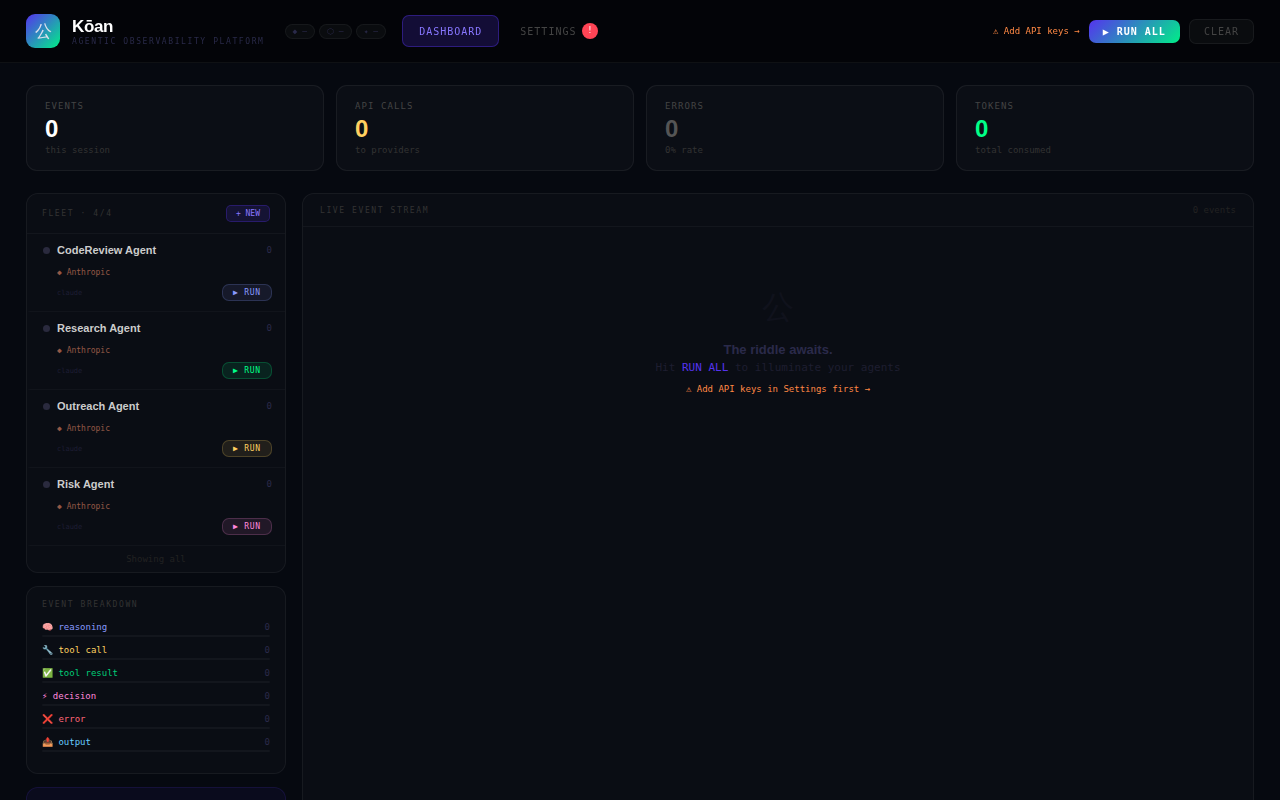

Kōan

See your AI agents think. Reasoning, tool calls & decisions

DevOps & Infrastructure

Coralogix

Cloud-based platform for log analytics, monitoring, and observability of applications.

AI Coding Agents

Langfuse

LLM engineering platform for model tracing, prompt management, and application evaluation. Langfuse helps teams collaboratively debug…