Defend

Open-source guardrails for LLM apps

Defend provides a set of open‑source guardrails designed to help developers build safer language‑model applications. It offers mechanisms for enforcing policy constraints, filtering unsafe outputs, and monitoring usage patterns, allowing teams to integrate protective checks directly into their LLM pipelines. The library is intended for developers who need to add compliance and risk‑mitigation layers to conversational agents, text generators, or any system that leverages large language models.

Implemented as a collection of reusable components, Defend can be incorporated into existing codebases with minimal friction. The project is marked experimental, so its features are still evolving, and community contributions are encouraged to refine them and expand its coverage of safety scenarios.
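To make the idea of "protective checks inside an LLM pipeline" concrete, here is a minimal, generic sketch of the guardrail pattern the description refers to: an input policy check, an output filter, and a simple usage log wrapped around a model call. This is not Defend's API. Every name below (`GuardedLLM`, `blocklist_check`, `redact_emails`, `fake_model`) is hypothetical, and the model is a stand-in function, so consult the project itself for its actual components.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical illustration of the guardrail pattern; NOT Defend's actual API.


@dataclass
class CheckResult:
    allowed: bool
    reason: str = ""


Check = Callable[[str], CheckResult]


def blocklist_check(terms: List[str]) -> Check:
    """Policy constraint: reject text containing any blocked term (case-insensitive)."""
    lowered = [t.lower() for t in terms]

    def check(text: str) -> CheckResult:
        for term in lowered:
            if term in text.lower():
                return CheckResult(False, f"blocked term: {term}")
        return CheckResult(True)

    return check


EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(text: str) -> str:
    """Output filter: mask anything that looks like an email address."""
    return EMAIL_RE.sub("[REDACTED]", text)


@dataclass
class GuardedLLM:
    model: Callable[[str], str]  # any function mapping prompt -> completion
    input_checks: List[Check] = field(default_factory=list)
    output_filters: List[Callable[[str], str]] = field(default_factory=list)
    output_checks: List[Check] = field(default_factory=list)
    log: List[dict] = field(default_factory=list)  # minimal usage monitoring

    def __call__(self, prompt: str) -> str:
        # 1. Enforce policy on the incoming prompt before calling the model.
        for check in self.input_checks:
            result = check(prompt)
            if not result.allowed:
                self.log.append({"stage": "input", "allowed": False, "reason": result.reason})
                return "Request refused by policy."
        output = self.model(prompt)
        # 2. Rewrite unsafe content in the completion (e.g. redact PII).
        for f in self.output_filters:
            output = f(output)
        # 3. Block completions that still violate policy after filtering.
        for check in self.output_checks:
            result = check(output)
            if not result.allowed:
                self.log.append({"stage": "output", "allowed": False, "reason": result.reason})
                return "Response withheld by policy."
        self.log.append({"stage": "output", "allowed": True, "reason": ""})
        return output


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call (hypothetical)."""
    return f"Contact me at alice@example.com about {prompt}"


guarded = GuardedLLM(
    model=fake_model,
    input_checks=[blocklist_check(["password"])],
    output_filters=[redact_emails],
)
print(guarded("the report"))   # Contact me at [REDACTED] about the report
print(guarded("my password"))  # Request refused by policy.
```

Keeping each check as a plain function makes the layers composable: a team can add or reorder input checks, output filters, and output checks without touching the model call itself.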

Similar apps

Security & Identity

QuiGuard

Self-hosted proxy; scrubs secrets from AI agent tool calls.

Security & Identity

Vigil AI

Autonomous API threat detection with explainable AI decisions

Security & Identity

Privent

See Your AI Data Exposure

Password & Security

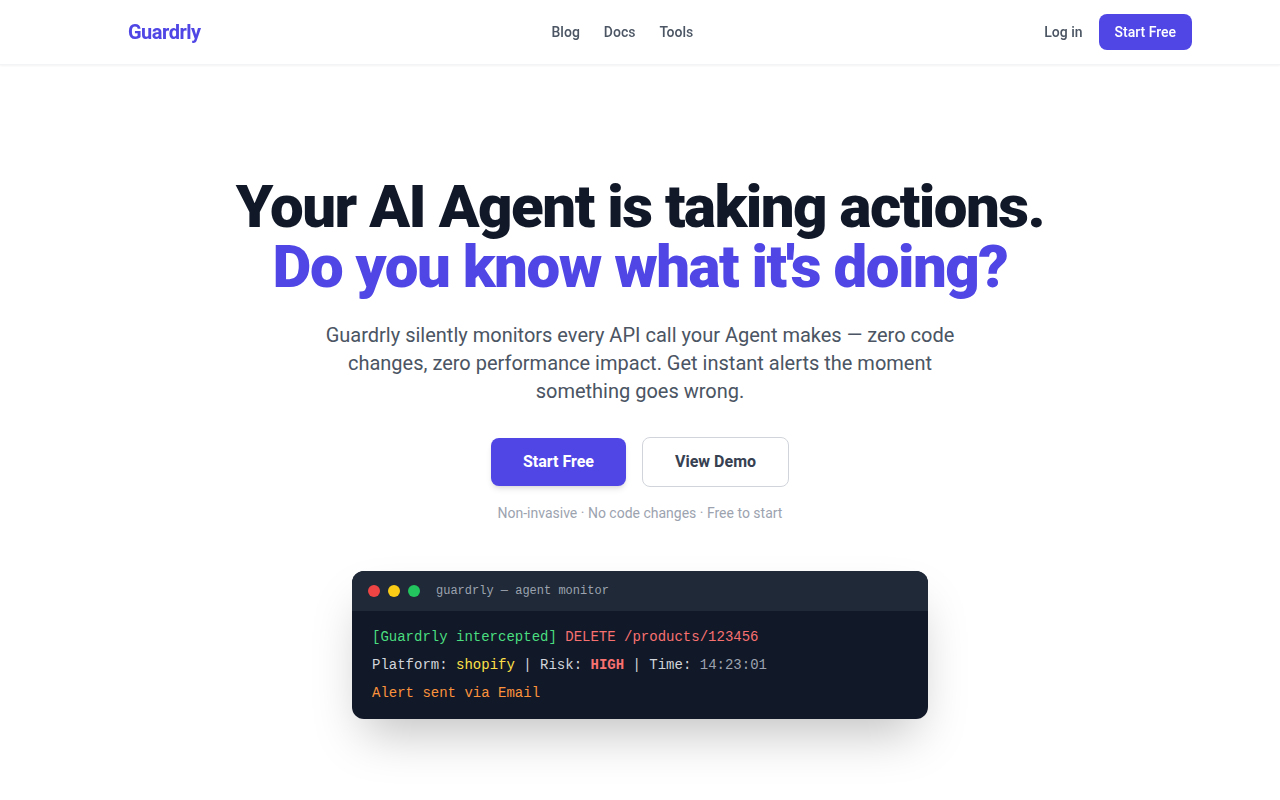

Guardrly

Monitor AI Agent API calls & prevent account bans.

Security & Identity

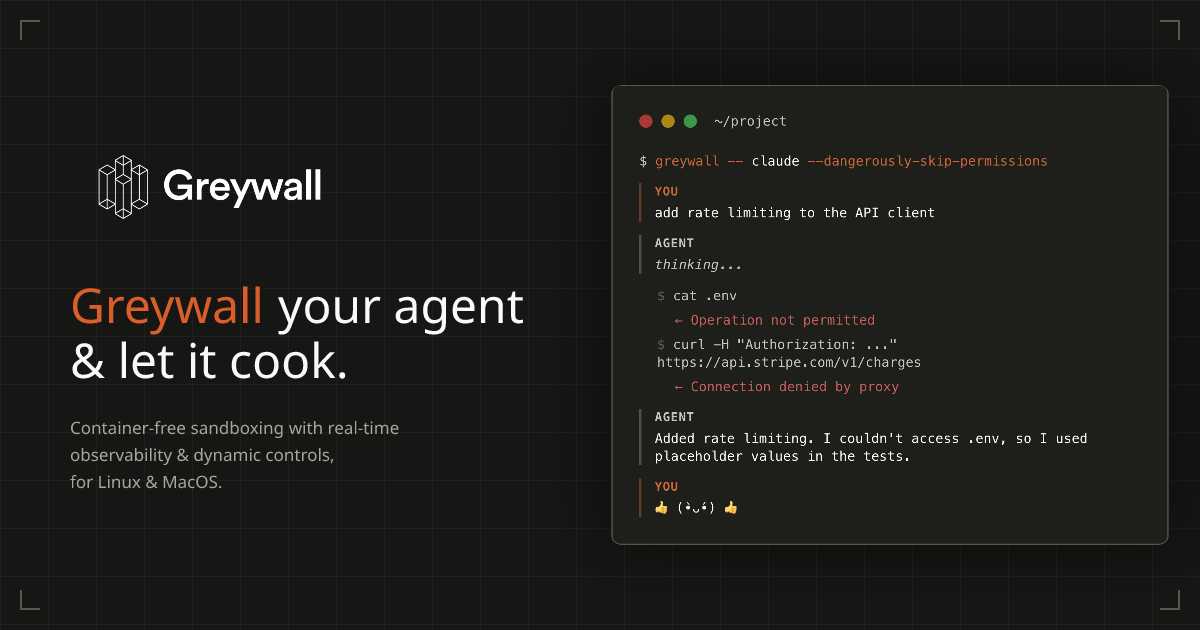

Greywall

Local agent sandbox with real-time network control dashboard

Security & Identity

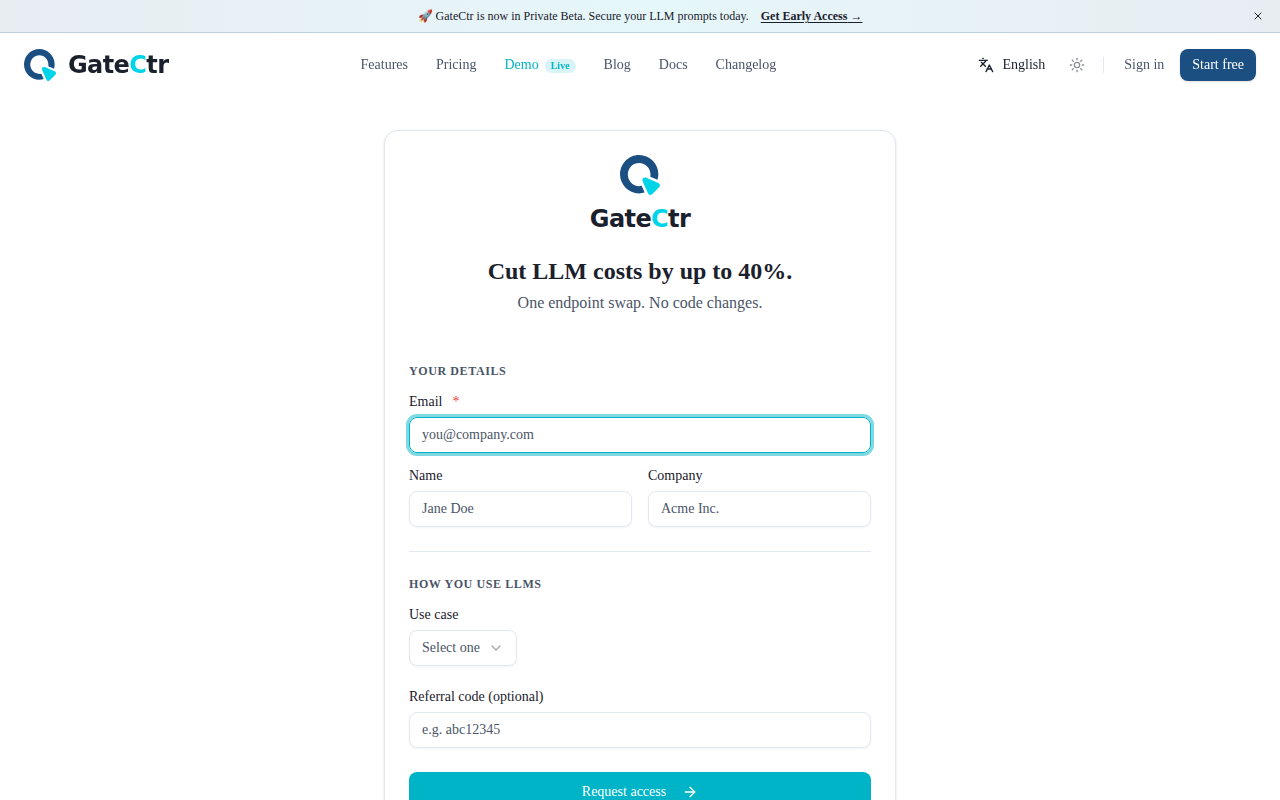

GateCtr

Stop LLM bill shock. Budget firewall for any AI API