GLM-5V-Turbo

Vision-to-code foundation model for real GUI automation

The model takes visual and textual inputs, including images, video, and files, and generates code that automates graphical user interfaces. It is designed for workflows that require understanding a screen layout, planning a sequence of actions, and executing them, which enables a "see-and-code" loop for GUI automation tasks.
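The understand, plan, and execute cycle described above can be illustrated with a minimal sketch. Nothing here is the model's actual API: `Action`, `run_loop`, and the toy `capture`, `plan`, and `execute` callbacks are hypothetical names, and the planner is a hard-coded stand-in for a call to a vision model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Action:
    """Hypothetical GUI action; the field names are assumptions for this sketch."""
    kind: str          # e.g. "type" or "click"
    target: str        # label of the UI element to act on
    text: str = ""     # text to enter, used only by "type"


def run_loop(
    capture: Callable[[], Dict],
    plan: Callable[[Dict, List[Action]], List[Action]],
    execute: Callable[[Action], None],
    max_steps: int = 5,
) -> List[Action]:
    """Observe the screen, ask the planner for actions, run them, repeat."""
    history: List[Action] = []
    for _ in range(max_steps):
        screen = capture()                # "see": observe the current state
        actions = plan(screen, history)   # "plan": a real agent would call the model here
        if not actions:                   # an empty plan means the task is done
            break
        for action in actions:
            execute(action)               # "act": apply the action to the GUI
            history.append(action)
    return history


def demo():
    """Toy login form held in a dict, standing in for a real screen."""
    state = {"username": "", "submitted": False}

    def capture() -> Dict:
        return dict(state)

    def plan(screen: Dict, history: List[Action]) -> List[Action]:
        if not screen["username"]:
            return [Action("type", "username", "alice")]
        if not screen["submitted"]:
            return [Action("click", "submit")]
        return []

    def execute(action: Action) -> None:
        if action.kind == "type":
            state[action.target] = action.text
        elif action.kind == "click" and action.target == "submit":
            state["submitted"] = True

    return run_loop(capture, plan, execute), state
```

Re-capturing the screen after every batch of actions lets the planner react to what actually happened rather than to what it expected, and `max_steps` bounds the loop if the task never converges.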

Target users are developers and engineers building agents that interpret visual environments and produce corresponding code, for example through integrations with Claude Code, OpenClaw, or similar automation frameworks. The model supports a context window of up to 200K tokens and can output up to 128K tokens, which accommodates complex, multi-step programming tasks.

Distinctive features include multiple thinking modes for different scenarios, real-time streaming of responses, built-in function calling to invoke external tools, and a context-caching mechanism that improves efficiency during extended interactions. The model is positioned as a multimodal coding foundation model optimized for agent-centric pipelines.
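To show what function calling usually involves on the application side, here is a minimal dispatch sketch. It assumes the model returns a tool call as a name plus a JSON-encoded argument string, a convention common to several chat APIs but not confirmed for this model. The `tool` decorator, the `click` tool, and `dispatch` are hypothetical names invented for this example.

```python
import json
from typing import Callable, Dict

# Registry mapping tool names to local Python callables.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function so it can be invoked by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def click(x: int, y: int) -> str:
    """Hypothetical GUI tool; a real one would drive the mouse."""
    return f"clicked ({x}, {y})"


def dispatch(tool_call: Dict[str, str]) -> str:
    """Run the tool named in the call with its JSON-decoded arguments.

    Assumed shape: {"name": "...", "arguments": "<JSON object string>"}.
    """
    name = tool_call["name"]
    if name not in TOOLS:
        # Return the error as text so it can be fed back to the model.
        return f"error: unknown tool {name!r}"
    args = json.loads(tool_call["arguments"])
    return TOOLS[name](**args)
```

For example, `dispatch({"name": "click", "arguments": '{"x": 10, "y": 20}'})` calls the registered `click` function, and its return value would normally be sent back to the model as the tool result.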

Similar apps

AI Coding Agents

MolmoWeb

Open web agents from data to deployment

AI Coding Agents

Kimi K2.6

Open-source SOTA for long-horizon coding and agent swarms

AI Coding Agents

OpenOwl

Automate what APIs can't in one prompt done locally

AI Agents & Automation

Agent TARS

Multimodal AI agent for GUI interaction

AI Agents & Automation

ZooClaw

Your proactive team of AI specialists in one place

AI Coding Agents

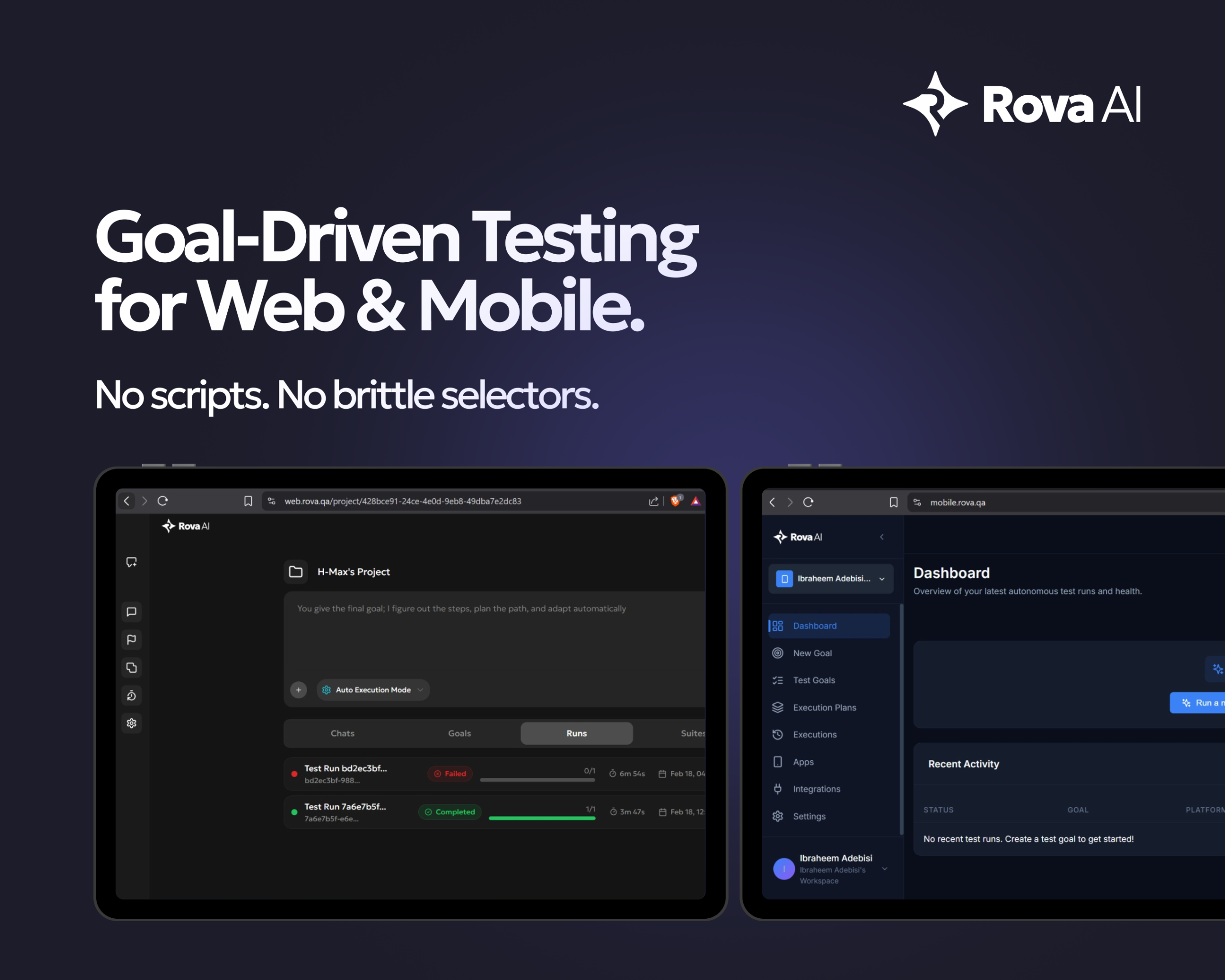

Rova AI

Autonomous, goal-driven testing for web & mobile apps