Tracium

Tracium is an AI Evaluation Platform for testing and benchmarking AI model performance.

Tracium provides a developer‑first observability layer for AI systems: users instrument their code with a single-line import and can begin tracing AI agents immediately. It records detailed information about each request, including token usage, latency, tool hops, and model invocations, and aggregates this data to show costs, errors, and performance metrics in real time.
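To make the idea of per-request tracing concrete, here is a minimal, generic sketch in Python. It is not Tracium's API: the `Tracer` class, the `trace` decorator, the `fake_model_call` function, and the flat `price_per_1k_tokens` value are all hypothetical names and numbers chosen for illustration. It only shows how latency, token counts, and errors might be recorded per call and rolled up into a cost summary.

```python
import time
from dataclasses import dataclass, field
from functools import wraps


@dataclass
class TraceRecord:
    name: str
    latency_s: float
    tokens: int
    error: bool


@dataclass
class Tracer:
    # Hypothetical flat price, used only for illustration.
    price_per_1k_tokens: float = 0.002
    records: list = field(default_factory=list)

    def trace(self, func):
        """Wrap a callable that returns (text, tokens_used)."""
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                text, tokens = func(*args, **kwargs)
            except Exception:
                # Record the failed call, then let the error propagate.
                elapsed = time.perf_counter() - start
                self.records.append(TraceRecord(func.__name__, elapsed, 0, True))
                raise
            elapsed = time.perf_counter() - start
            self.records.append(TraceRecord(func.__name__, elapsed, tokens, False))
            return text
        return wrapper

    def summary(self):
        """Aggregate recorded calls into simple totals."""
        total_tokens = sum(r.tokens for r in self.records)
        return {
            "calls": len(self.records),
            "errors": sum(r.error for r in self.records),
            "total_tokens": total_tokens,
            "estimated_cost": total_tokens / 1000 * self.price_per_1k_tokens,
        }


tracer = Tracer()


@tracer.trace
def fake_model_call(prompt):
    # Stand-in for a real model invocation; tokens are a crude word count.
    return prompt.upper(), len(prompt.split())


fake_model_call("hello tracing world")
summary = tracer.summary()
```

A hosted platform would presumably ship these records to a backend rather than keep them in memory; the in-memory list here just keeps the sketch self-contained.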

The platform supports per‑tenant analytics, enabling teams to slice usage and outcomes by customer, workspace, or environment, and offers A/B testing of prompts, models, and routing strategies. It also includes drift detection to surface shifts in inputs or outputs before degradation occurs, and it can replay failures for debugging.
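As a rough illustration of what drift detection can mean in practice, the sketch below compares a recent window of a numeric signal (for example, output length) against a baseline window and flags a shift when the recent mean moves more than a chosen number of baseline standard deviations. This is one simple heuristic, not a description of how Tracium detects drift; the function name `detect_drift`, the `threshold` default, and the sample data are assumptions made for the example.

```python
from statistics import mean, stdev


def detect_drift(baseline, recent, threshold=3.0):
    """Return True when the recent mean lies more than `threshold`
    baseline standard deviations away from the baseline mean."""
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least 2 baseline values and 1 recent value")
    spread = stdev(baseline)
    shift = abs(mean(recent) - mean(baseline))
    if spread == 0:
        # A constant baseline: any change at all counts as drift.
        return shift > 0
    return shift / spread > threshold


# Hypothetical output lengths (in tokens) for illustration only.
baseline_lengths = [100, 104, 98, 101, 97, 102]
stable = detect_drift(baseline_lengths, [99, 103, 100])
drifted = detect_drift(baseline_lengths, [150, 160, 155])
```

With this sample data, the first recent window stays close to the baseline and the second does not. Real systems often use distribution-level tests instead of a mean comparison, so treat this as a starting point.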

Targeted at developers building and scaling AI applications, Tracium aims to simplify monitoring, debugging, and optimization without extensive setup, and it offers tiered pricing that scales from a free tier for early experimentation to higher‑capacity plans for production workloads.

Similar apps

AI Coding Agents

ClawTrace

Make your OpenClaw better, cheaper, and faster

Budgeting & Personal Finance

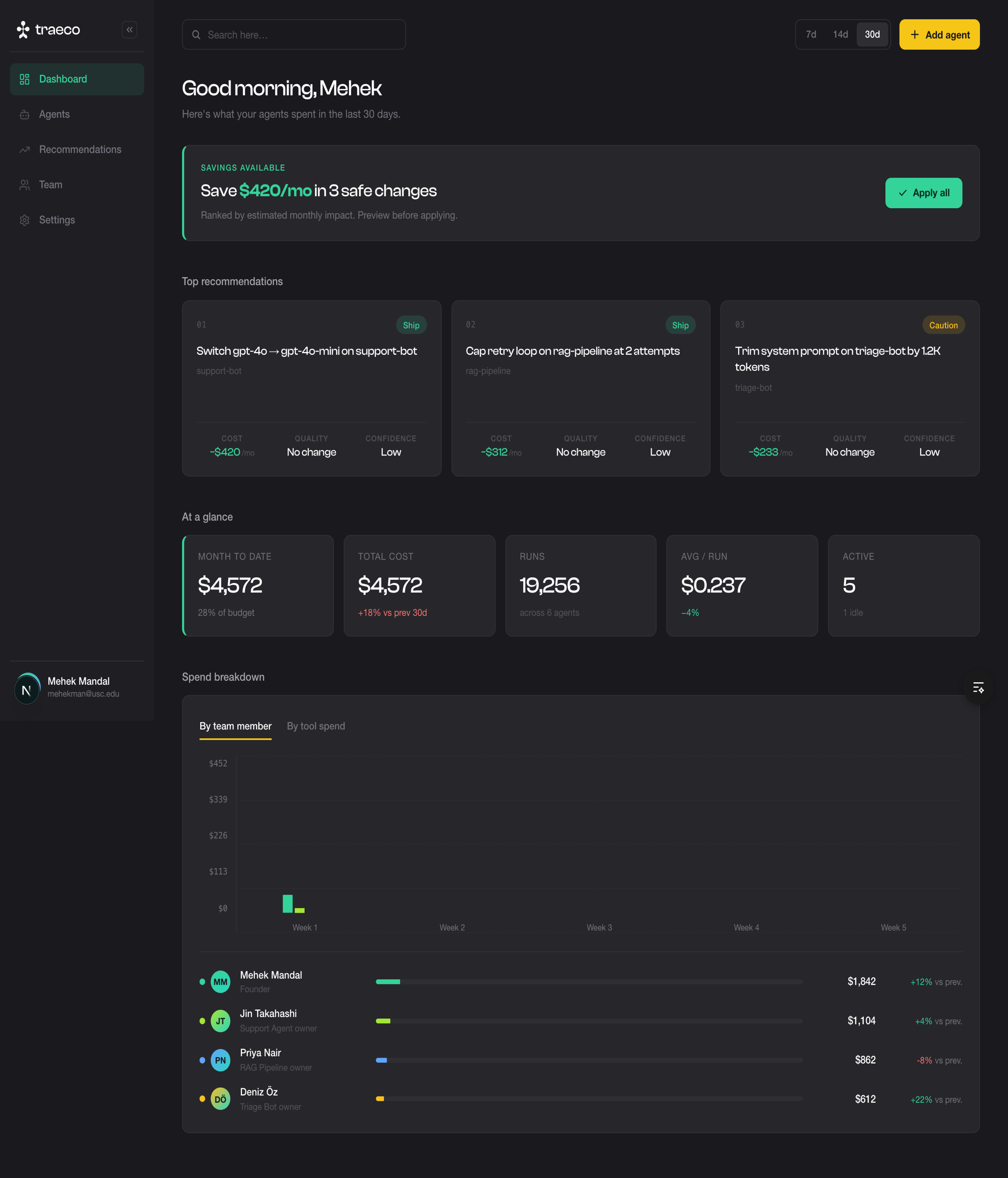

Traeco

Cost Optimization for AI Agents

AI Coding Agents

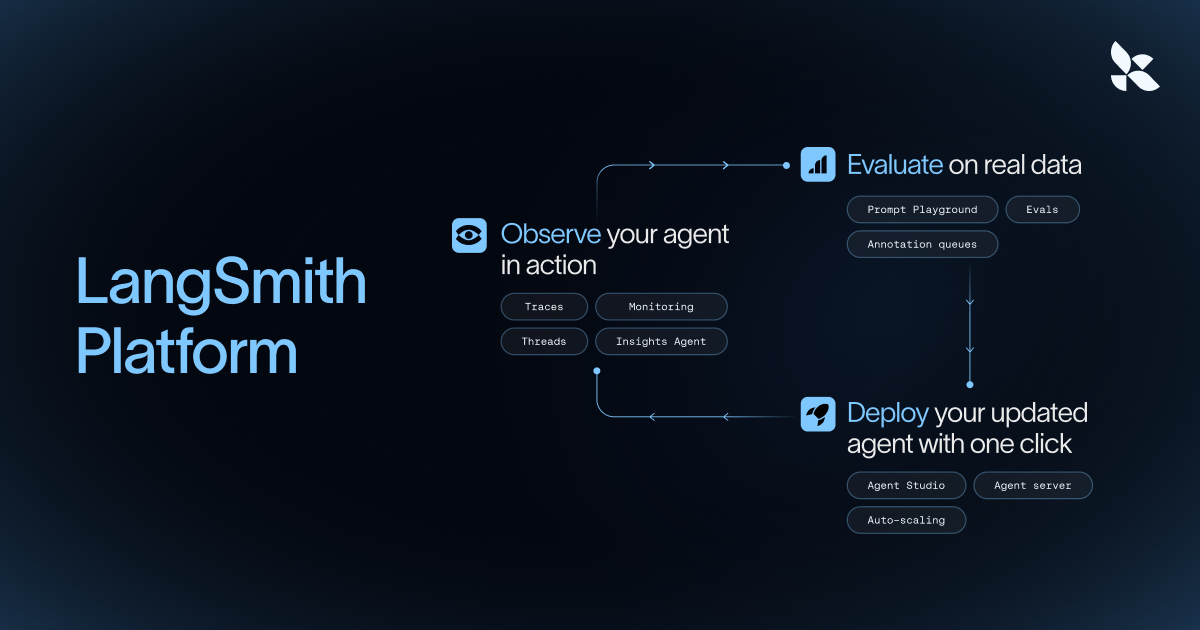

LangSmith

Observability platform for LLM applications, tracking prompts, latency, and costs.

AI Coding Agents

Langfuse

LLM engineering platform for model tracing, prompt management, and application evaluation. Langfuse helps teams collaboratively debug…

AI Coding Agents

QuickCompare by Trismik

Compare LLMs on your data, measure, and pick the best.

AI Coding Agents

Opik

Evaluate, test, and ship LLM applications with a suite of observability tools to calibrate language model outputs across your dev and…