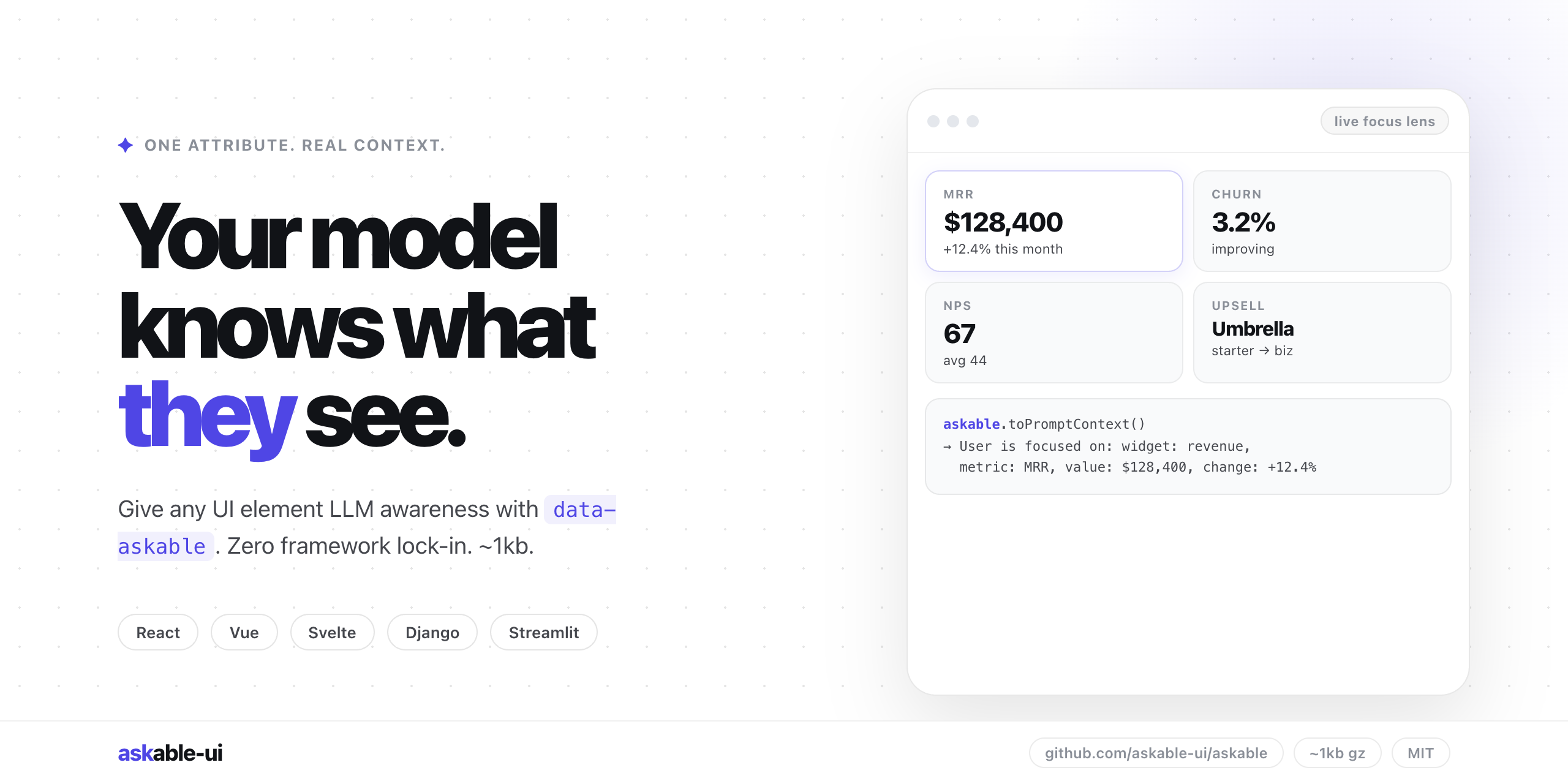

askable

Two lines of code to give your LLM eyes.

askable lets a language model receive the exact UI element a user is looking at. You add a single `data-askable` attribute, carrying JSON metadata, to any meaningful DOM node. When the user focuses that element through hover, click, or another interaction, the library captures the attached metadata and exposes it through `askable.toPromptContext()`. That context is then injected at the AI boundary, so the model works with the user’s visual focus instead of the whole page.
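To make the flow concrete, here is a minimal sketch of the idea, not the library's actual implementation. Only the `data-askable` attribute and the `toPromptContext()` method name come from the description above. `AttributeSource`, `readAskableMeta`, `FocusContext`, the sample metadata, and the wording of the returned string are assumptions made for illustration, and a plain object stands in for a DOM element so the snippet runs outside a browser.

```typescript
// Hypothetical sketch: apart from `data-askable` and `toPromptContext`,
// every name here is illustrative and not taken from the askable library.

// Minimal structural type so the sketch runs without a browser DOM.
interface AttributeSource {
  getAttribute(name: string): string | null;
}

type AskableMeta = Record<string, unknown>;

// Parse the JSON metadata stored in a `data-askable` attribute.
// Returns null when the attribute is missing or is not a JSON object.
function readAskableMeta(el: AttributeSource): AskableMeta | null {
  const raw = el.getAttribute("data-askable");
  if (raw === null) return null;
  try {
    const parsed: unknown = JSON.parse(raw);
    if (typeof parsed === "object" && parsed !== null && !Array.isArray(parsed)) {
      return parsed as AskableMeta;
    }
    return null;
  } catch {
    return null;
  }
}

class FocusContext {
  private current: AskableMeta | null = null;

  // Call this from a hover, click, or focus handler.
  focus(el: AttributeSource): void {
    const meta = readAskableMeta(el);
    if (meta !== null) this.current = meta;
  }

  // Serialize the most recently focused element for the model.
  toPromptContext(): string {
    return this.current === null
      ? "The user is not focused on any annotated element."
      : `The user is focused on: ${JSON.stringify(this.current)}`;
  }
}

// Stand-in for <div data-askable='{"metric":"revenue","delta":"-12%"}'>.
const revenueCard: AttributeSource = {
  getAttribute: (name) =>
    name === "data-askable" ? '{"metric":"revenue","delta":"-12%"}' : null,
};

const context = new FocusContext();
context.focus(revenueCard);
console.log(context.toPromptContext());
// prints: The user is focused on: {"metric":"revenue","delta":"-12%"}
```

In a browser, the same `focus` call would typically be wired to events such as `mouseover` or `focusin`; how the real library does this is not described here.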

The tool is framework-agnostic and provides lightweight adapters for React, Vue, Svelte, and plain HTML, so developers can annotate existing components without rewriting them. Because the metadata is already part of the UI rendering logic, no extra data pipelines, prompt templates, or mapping layers are required. It suits dashboards, forms, tables, and other interactive interfaces where the assistant should respond to the element the user is inspecting.

Its distinctive approach is to tie the model’s input directly to the user’s attention, delivering a concise JSON payload that reflects the currently focused element. This reduces the need for full-page serialization and enables real-time, context-aware AI interactions across any front-end stack.
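As a rough illustration of injecting that payload at the AI boundary, the sketch below prepends the focus context to a chat-style message list just before the model call. `ChatMessage`, `buildMessages`, and the sample payload are hypothetical names chosen for this example. It assumes the context arrives as a string and that the model accepts role-tagged messages. Neither assumption is confirmed by the description above.

```typescript
// Hypothetical sketch: `ChatMessage`, `buildMessages`, and the sample payload
// are illustrative and not part of askable or any specific model API.

type ChatMessage = { role: "system" | "user"; content: string };

// Prepend the focused-element context as a system message.
// When there is no context, only the user's question is sent.
function buildMessages(promptContext: string, question: string): ChatMessage[] {
  const messages: ChatMessage[] = [];
  if (promptContext.trim() !== "") {
    messages.push({ role: "system", content: `UI context: ${promptContext}` });
  }
  messages.push({ role: "user", content: question });
  return messages;
}

// In an application this string would come from the focus-tracking layer;
// here it is a hand-written payload for one focused table row.
const focused = JSON.stringify({ widget: "churn-table", row: "Enterprise", churn: "4.1%" });
const messages = buildMessages(focused, "Why is this number so high?");
console.log(messages);
```

The model receives one small object describing the focused row rather than a serialized copy of the whole page, which is the trade-off the paragraph above describes.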


Similar apps

AI Coding Agents

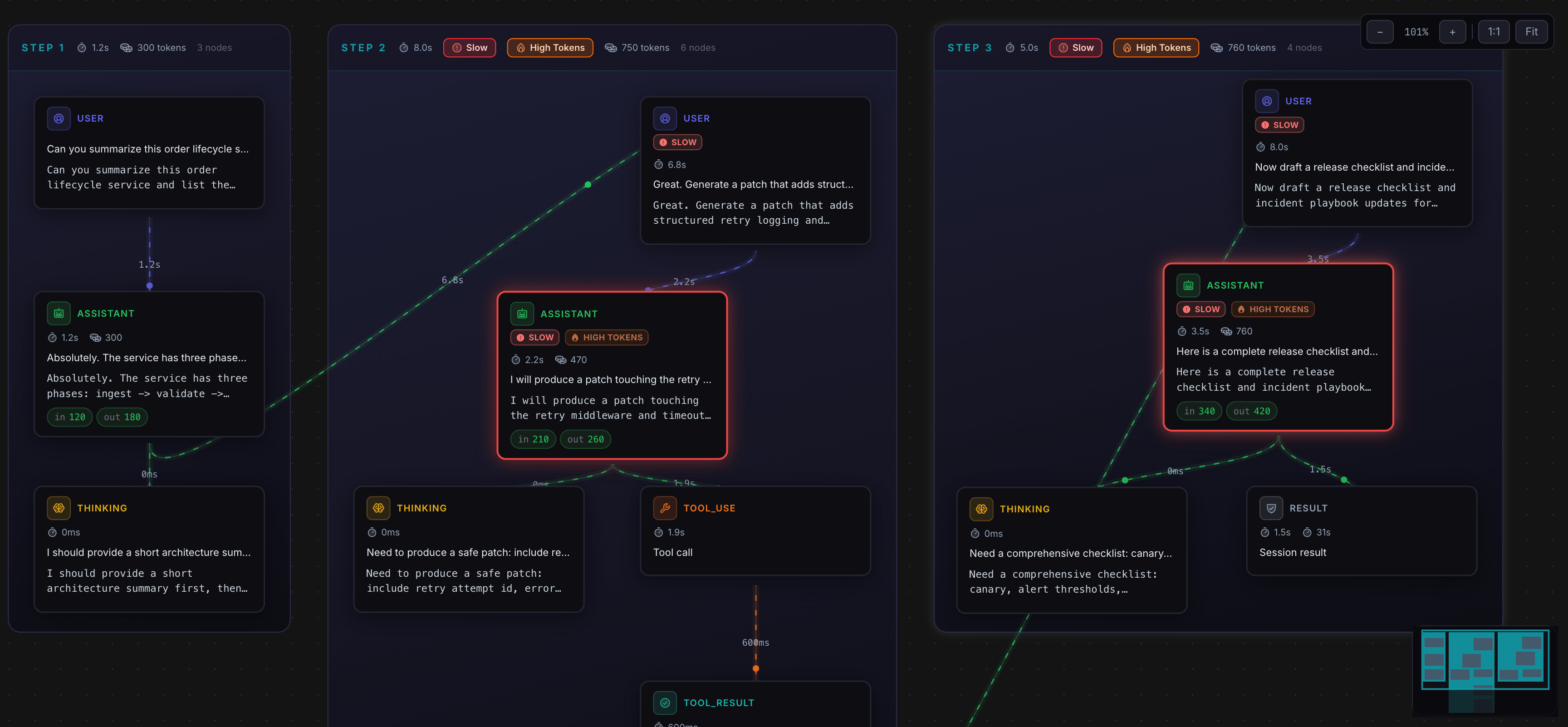

AgenticLens

Visual debugging, tracing, and replay for agent workflows

AI Coding Agents

Flowlines

Flowlines detects how your agents behave across sessions and surfaces the exact memory fix that prevents the next failure. Evidence-backed…

AI Coding Agents

uiprompt.app

Build beautiful UI, prompt by prompt

AI Coding Agents

Ask The AI

Find out if AI recommends you. Then fix it.

AI Coding Agents

Arize Phoenix

Open-source platform for LLM tracing, evaluation, and optimization. Features automatic instrumentation, prompt playground, and real-time AI…

AI Coding Agents

OpenPUI

We built UI for humans. Then agents. Now pets.