crawley

Unix-way web crawler.

The tool crawls web pages and prints every link it discovers, streaming unique URLs to standard output with fragments stripped. It parses HTML with a fast SAX parser, extracts URLs from JavaScript and CSS using lexical parsers, and can report API endpoints as well as image, video, audio, and form URLs. Users can set the crawl depth, include or exclude specific HTML tags, ignore URLs matching patterns, and follow subdomains. Optional politeness features cover robots.txt handling and delays between requests.

It is a command-line application written in Go. It supports proxy configuration through environment variables, custom cookies and headers in curl-compatible syntax, and a "brute" mode that also scans HTML comments for URLs. Other modes include directory-only traversal, a "headless" mode that skips HEAD requests (the name refers to the HTTP method, not to a headless browser), and tag-based filtering for targeted scans. Binaries are available for Linux, FreeBSD, macOS, and Windows, with packages for Debian/Red Hat and Arch Linux.
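As a rough illustration of environment-based proxy configuration and curl-style headers, the Go sketch below uses only the standard library. It is an assumption about how such features can be built, not a description of crawley's internals; `parseHeader` and `newClient` are hypothetical names, and the header value is made up.

```go
package main

import (
	"fmt"
	"net/http"
	"strings"
)

// parseHeader splits a curl-style "Name: value" string into its parts.
// Hypothetical helper for illustration, not part of crawley.
func parseHeader(s string) (name, value string, ok bool) {
	name, value, ok = strings.Cut(s, ":")
	name, value = strings.TrimSpace(name), strings.TrimSpace(value)
	if !ok || name == "" {
		return "", "", false
	}
	return name, value, true
}

// newClient returns an HTTP client whose proxy is resolved from the
// HTTP_PROXY, HTTPS_PROXY, and NO_PROXY environment variables.
func newClient() *http.Client {
	return &http.Client{
		Transport: &http.Transport{Proxy: http.ProxyFromEnvironment},
	}
}

func main() {
	req, err := http.NewRequest(http.MethodGet, "https://example.com/", nil)
	if err != nil {
		fmt.Println("building request:", err)
		return
	}
	// Attach a user-supplied header given in curl syntax (sample value).
	if name, value, ok := parseHeader("X-Example: demo"); ok {
		req.Header.Set(name, value)
	}
	_ = newClient() // the request is not sent in this sketch
	fmt.Println(req.Header.Get("X-Example"))
}
```

`http.ProxyFromEnvironment` is the standard-library resolver for these variables, so a client built this way picks up the proxy without any extra flags.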

The codebase is idiomatic Go, under 1500 source lines, with more than 80% test coverage. It offers a lightweight yet feature-rich way for developers to enumerate a site's links, resources, or endpoints without a full browser engine.

Similar apps

API & Network Testing

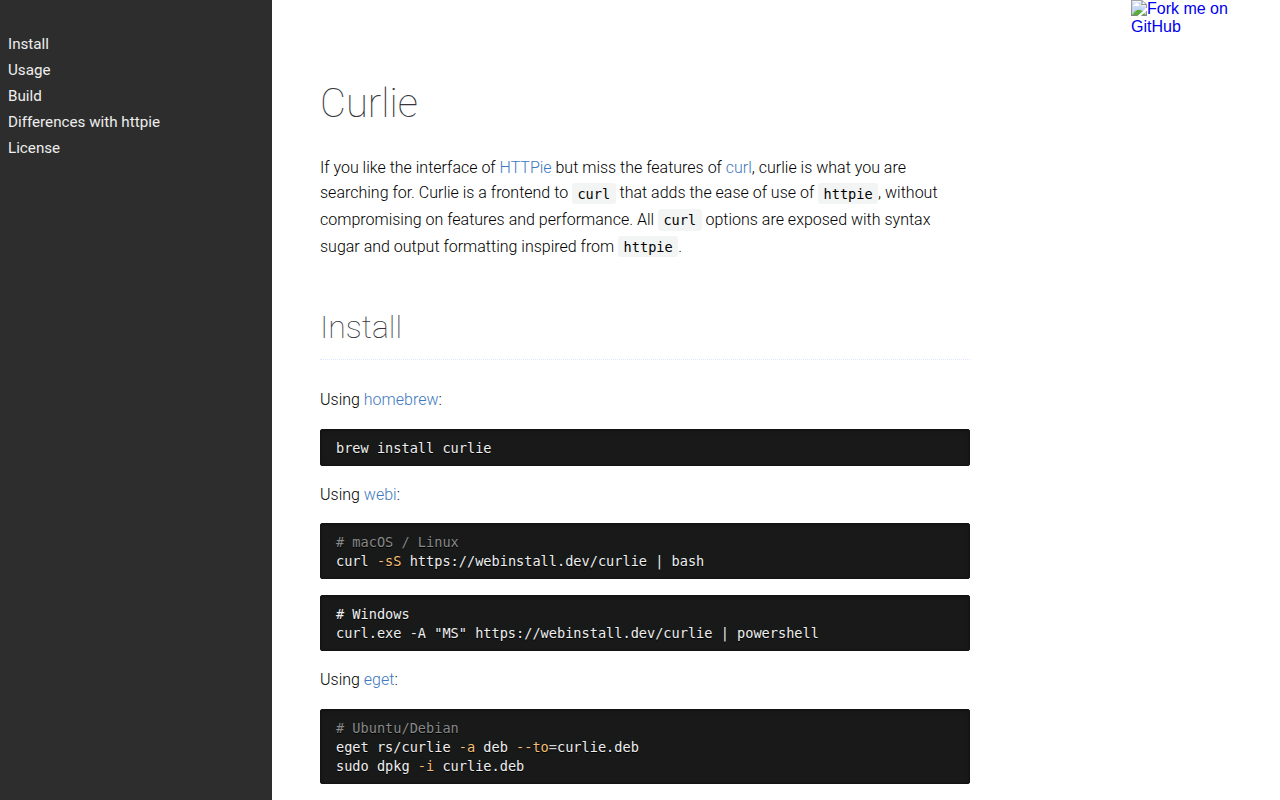

curlie

A curl frontend with the ease of use of HTTPie.

API & Network Testing

tunnelmole

tunnelmole - listed on awesome-cli-apps.

API & Network Testing

shell2http

Shell script based HTTP server.

API & Network Testing

Lura

High-performance API Gateway.

API & Network Testing

ATAC

A feature-full TUI API client made in Rust.

Network & Connectivity

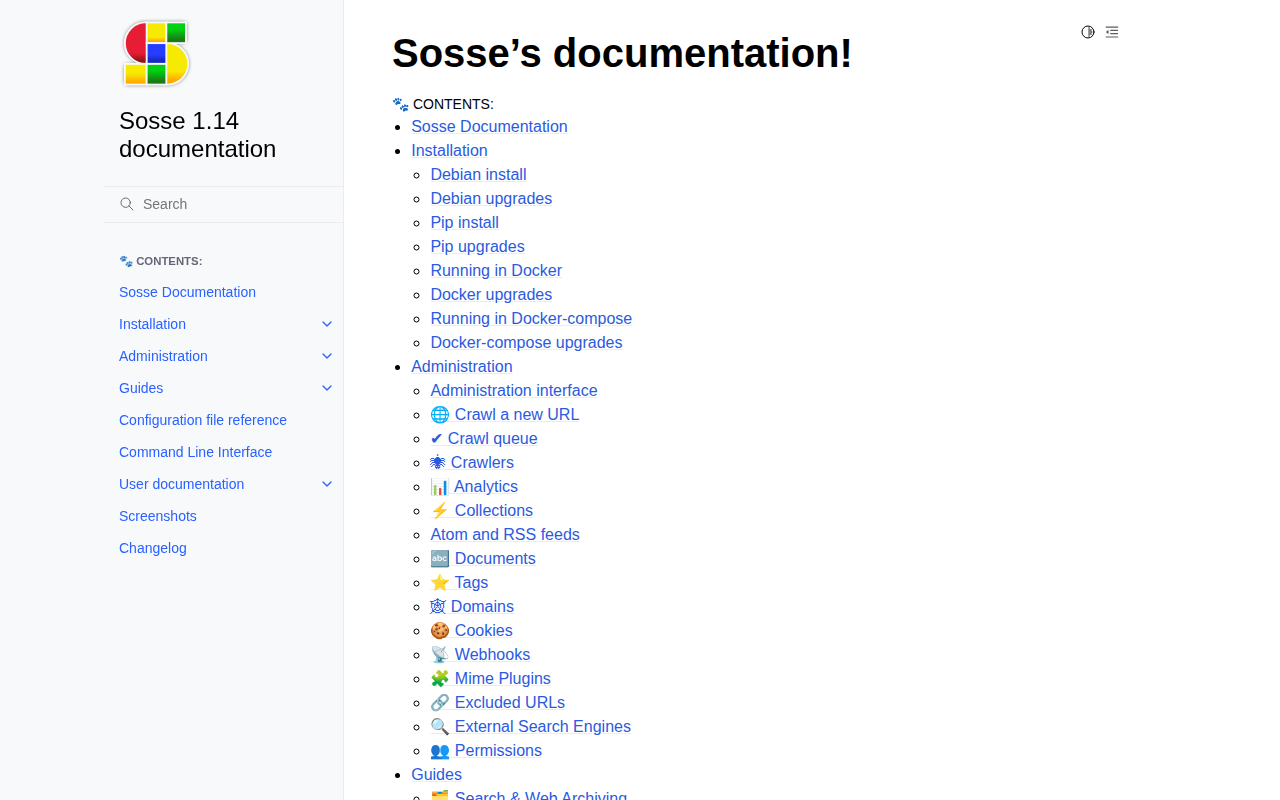

Sosse

Selenium based search engine and crawler with offline archiving.