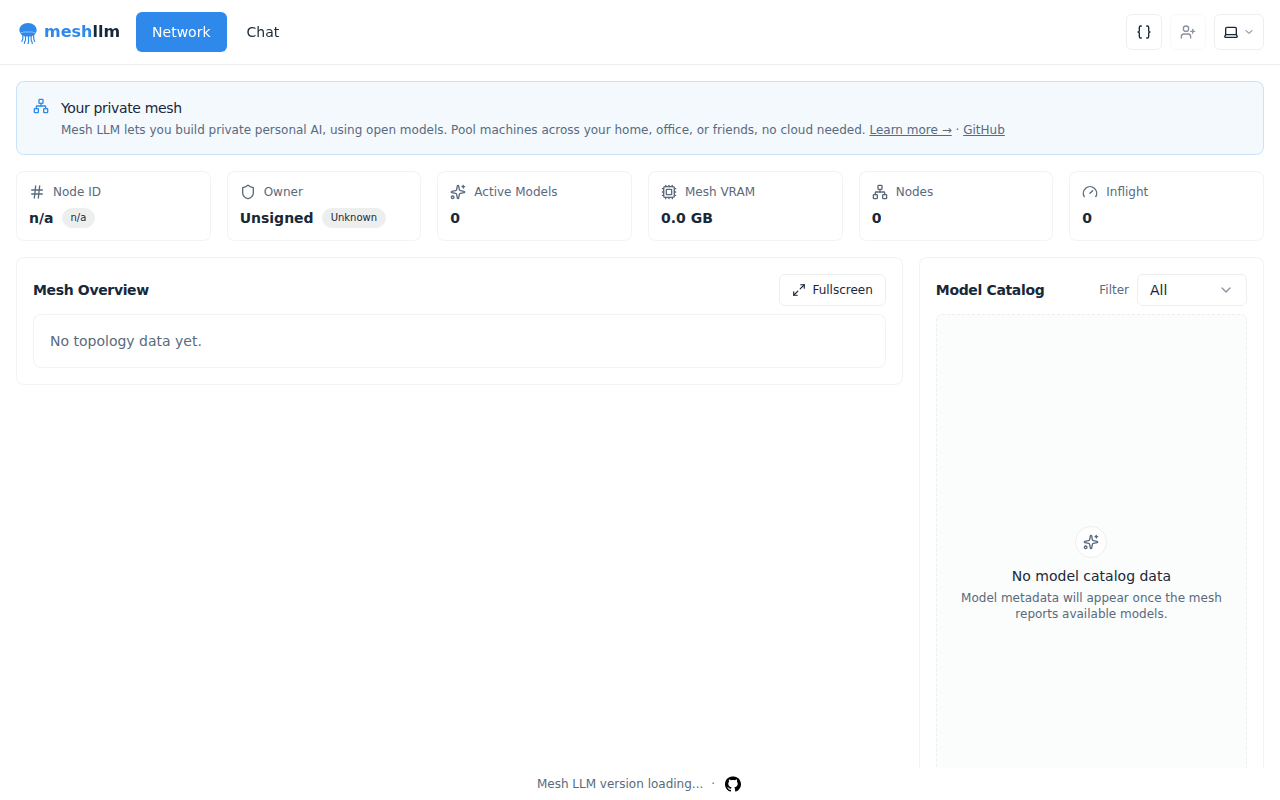

Mesh LLM

Pool compute to run powerful open models

Mesh LLM is a framework for pooling compute to run large open-source language models. Developers combine multiple machines or processing units into a single pool, so that a model can run with higher throughput, or on hardware that would be insufficient on its own. The system is intended for experimentation and is aimed at users who want to explore scaling open models without relying on proprietary services. Its distinguishing focus is pooling compute across distributed environments to serve demanding language-model workloads.

Similar apps

DevOps & Infrastructure

Coithub

Torrent-like protocol for deploying ready-to-use LLM models

DevOps & Infrastructure

LLMKube

Kubernetes operator for llama.cpp-native LLM inference with GPU scheduling, Apple Silicon Metal support, and OpenAI-compatible API.

DevOps & Infrastructure

DeployStack

Open-source, self-hosted alternative to Vercel and Render

DevOps & Infrastructure

Bacalhau

Bacalhau is an open-source platform for fast, cost-effective, and secure distributed computing, seamlessly integrating with Docker and…

DevOps & Infrastructure

OpenBerth

AI-assistant native self-hosted deployment platform

AI Coding Agents

Hyperspace

Decentralised peer-to-peer network for agents