LLMKube

Kubernetes operator for llama.cpp-native LLM inference with GPU scheduling, Apple Silicon Metal support, and an OpenAI-compatible API.

LLMKube is a Kubernetes operator that automates the deployment of LLM inference services built on llama.cpp and compatible runtimes. Users declare a Model and an InferenceService in YAML. The operator then handles model download, caching, health checks, scaling, and GPU scheduling across nodes, and it exposes an OpenAI-compatible HTTP API that works with existing SDKs and libraries such as LangChain.
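
Because the API follows the OpenAI chat-completions format, any HTTP client can talk to a deployed model. The sketch below uses only the Python standard library and assumes the `/v1/chat/completions` path that llama.cpp's server exposes. The Service URL `http://my-model.default.svc.cluster.local:8080`, the model name, and the helper functions are hypothetical placeholders, not LLMKube defaults or APIs.

```python
import json
import urllib.request

# Hypothetical in-cluster URL of an LLMKube InferenceService. The actual
# Service name, namespace, and port depend on how the model was deployed.
BASE_URL = "http://my-model.default.svc.cluster.local:8080"


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST to the OpenAI-style /v1/chat/completions endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Return the assistant text from an OpenAI-style chat completion response."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


def chat(base_url: str, model: str, prompt: str, timeout: float = 60.0) -> str:
    """Send one chat turn and return the reply (requires a reachable server)."""
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return extract_reply(response.read())
```

From a pod in the same cluster, `chat(BASE_URL, "my-model", "Say hello")` would send one request and return the reply text. Request building and response parsing are kept separate so both can be checked without a running server. The official OpenAI SDKs can target the same endpoint by setting their base URL.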

The operator supports both NVIDIA CUDA GPUs and Apple Silicon Metal devices. Metal devices are handled by a dedicated Metal Agent, so mixed-hardware clusters can serve models without custom scripting. A CLI and a Helm chart simplify installation on any Kubernetes cluster, and CPU, memory, and GPU count can be set with simple flags. The project is open source under the Apache-2.0 license, self-hostable, and requires no subscription.

Target users are teams that need to run private or cost-controlled LLM workloads on their own infrastructure, including air-gapped and multi-GPU environments. LLMKube replaces manual Docker Compose setups with a declarative, Kubernetes-native workflow for model versioning, scaling, and API exposure.

Similar apps

DevOps & Infrastructure

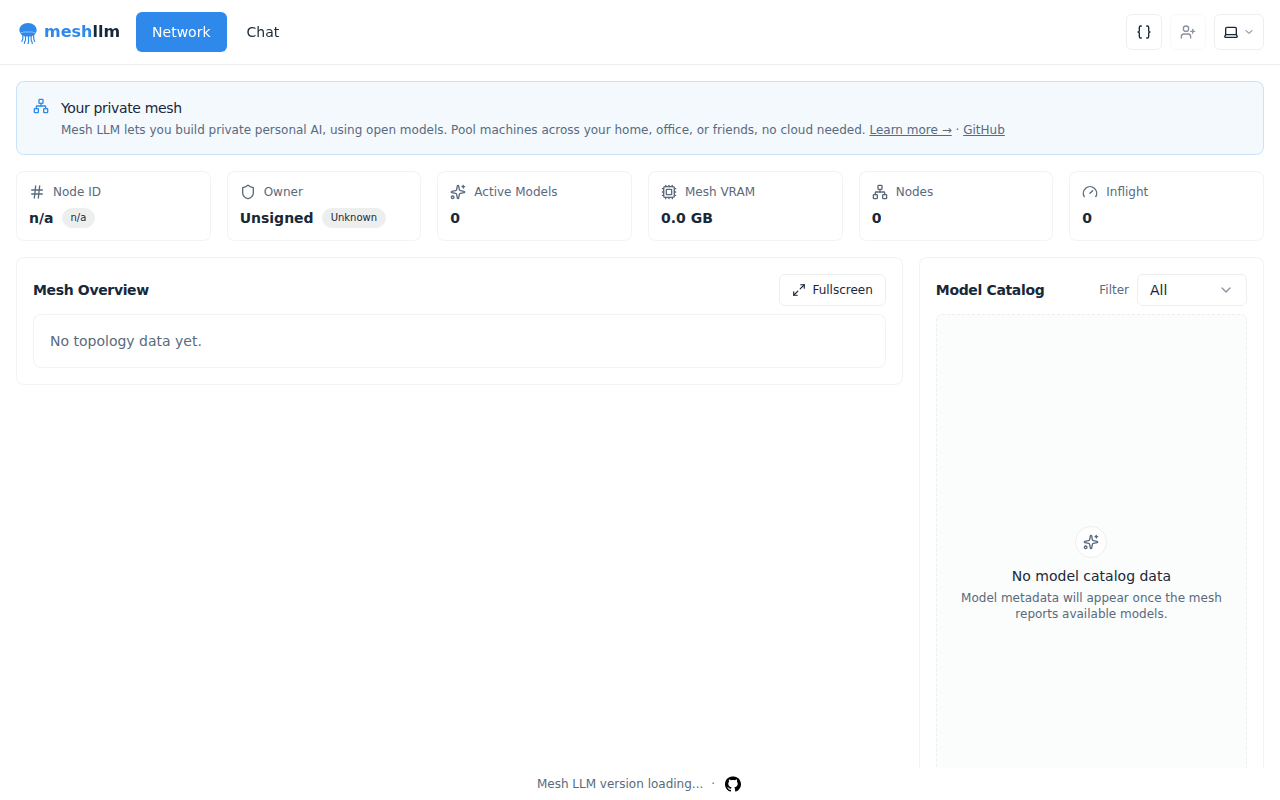

Mesh LLM

Pool compute to run powerful open models

AI Coding Agents

LocalAI

Run your AI models locally and generate images and audio (alternative to OpenAI and Claude).

DevOps & Infrastructure

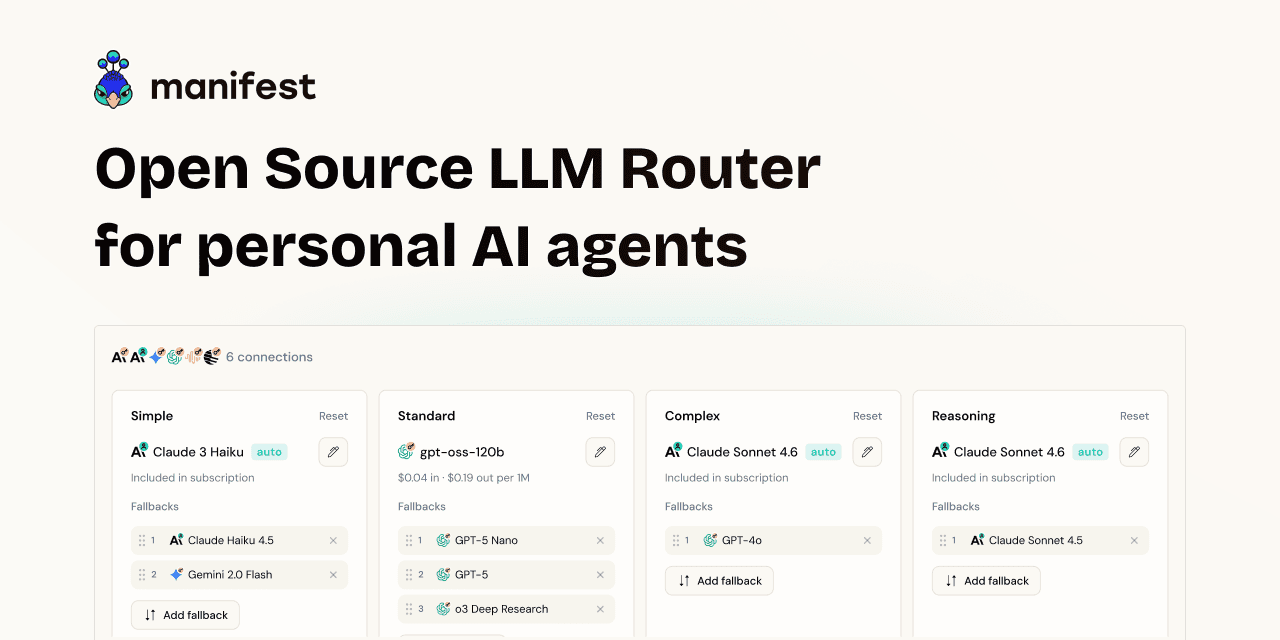

Manifest

Complete backend that fits into 1 YAML file.

DevOps & Infrastructure

Kubernetes

Open-source system for automating deployment, scaling, and management of containerized applications.

Window & Desktop Management

LlamaBarn

Menu bar app for running local LLMs

DevOps & Infrastructure

OpenBerth

AI-assistant native self-hosted deployment platform