Mistral Medium 3.5

A 128B model for coding, reasoning, and long tasks

Mistral Medium 3.5 is a 128‑billion‑parameter dense model that combines instruction following, reasoning, and code generation in a single architecture. It offers a 256k‑token context window and configurable reasoning effort, so it can handle both brief conversational replies and extended, multi‑step tasks. The model is released with open weights under a modified MIT license and can be self‑hosted on as few as four GPUs.
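
As a rough sanity check on the four‑GPU figure, you can estimate the weight footprint of a dense model by multiplying the parameter count by the bytes per parameter. The sketch below rests on assumptions the listing does not state. It assumes BF16 (2 bytes) or 8‑bit (1 byte) weights, sharded evenly across GPUs. It also ignores the KV cache (which grows with long contexts), activations, and runtime overhead, so real memory needs are higher.

```python
# Back-of-the-envelope weight-memory estimate for a dense model.
# Assumptions (not from the source): BF16 = 2 bytes/param, 8-bit = 1 byte/param,
# weights split evenly across GPUs; KV cache, activations and overhead ignored.

def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Total weight memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def per_gpu_gb(num_params: float, bytes_per_param: float, num_gpus: int) -> float:
    """Weight memory per GPU when the weights are sharded evenly."""
    return weight_memory_gb(num_params, bytes_per_param) / num_gpus


if __name__ == "__main__":
    params = 128e9  # 128 billion parameters, per the model description
    for label, nbytes in [("BF16", 2), ("8-bit", 1)]:
        total = weight_memory_gb(params, nbytes)
        shard = per_gpu_gb(params, nbytes, num_gpus=4)
        print(f"{label}: {total:.0f} GB total, {shard:.0f} GB per GPU across 4 GPUs")
```

At BF16 this works out to about 256 GB of weights, or 64 GB per GPU across four devices. Each GPU would therefore need more than 64 GB of memory before the KV cache is counted. The listing does not say which GPUs or which precision the four‑GPU figure assumes.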

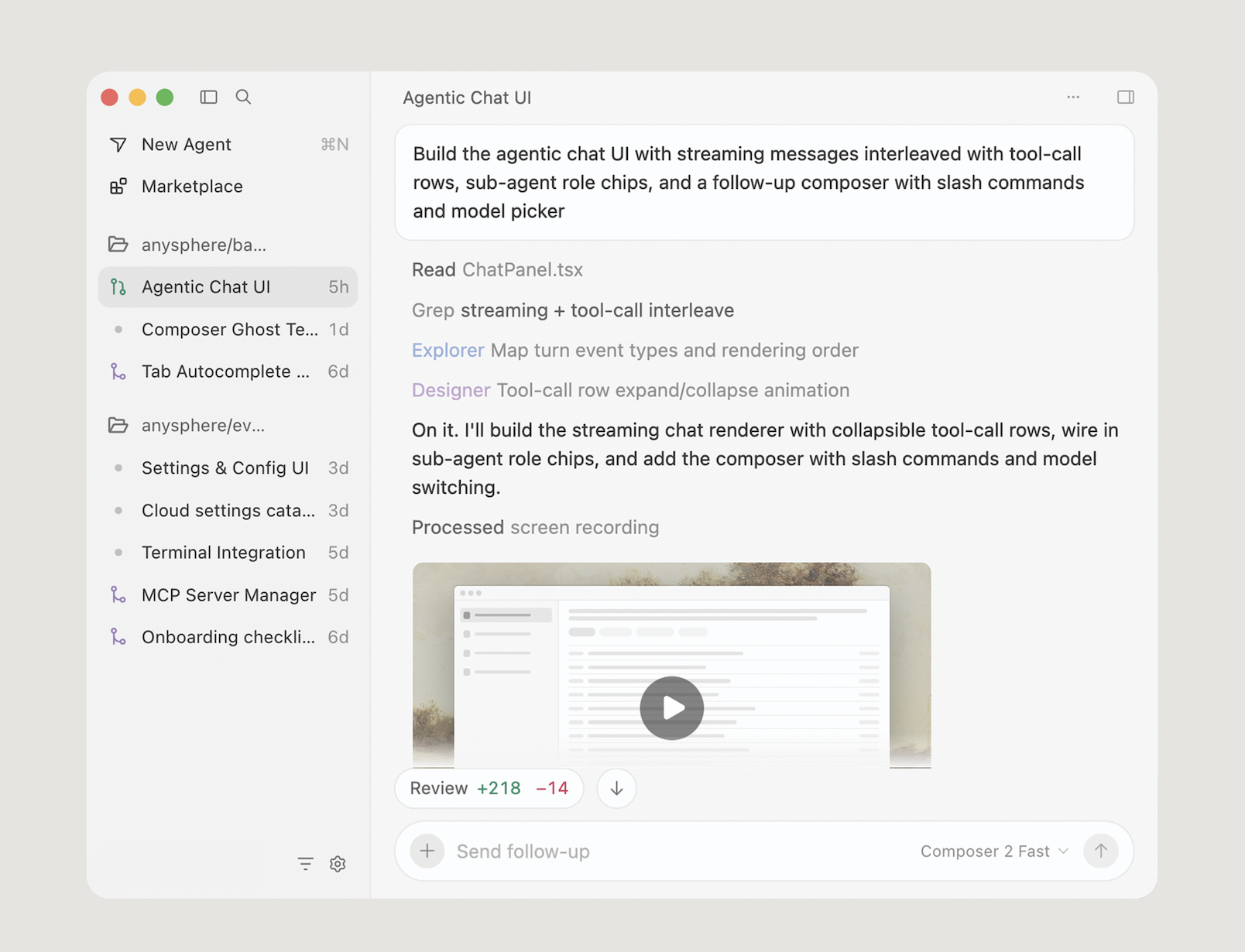

The model powers remote coding agents in the Vibe platform. These agents execute tasks in the cloud and can be launched from a command‑line interface or directly from Le Chat. They run asynchronously, keep working after the user steps away, and notify the user when the task is complete. A preview “Work mode” in Le Chat extends this capability to complex workflows, orchestrating parallel tool calls for research, analysis, and cross‑tool actions.

Mistral Medium 3.5 targets developers and teams that need long‑running, high‑capacity AI assistance for coding and productivity work, especially those building agentic systems that require sustained reasoning and multimodal inputs. Its open weights and modest hardware requirements make it suitable for self‑hosted deployments, from experimentation to production.

Similar apps

AI Coding Agents

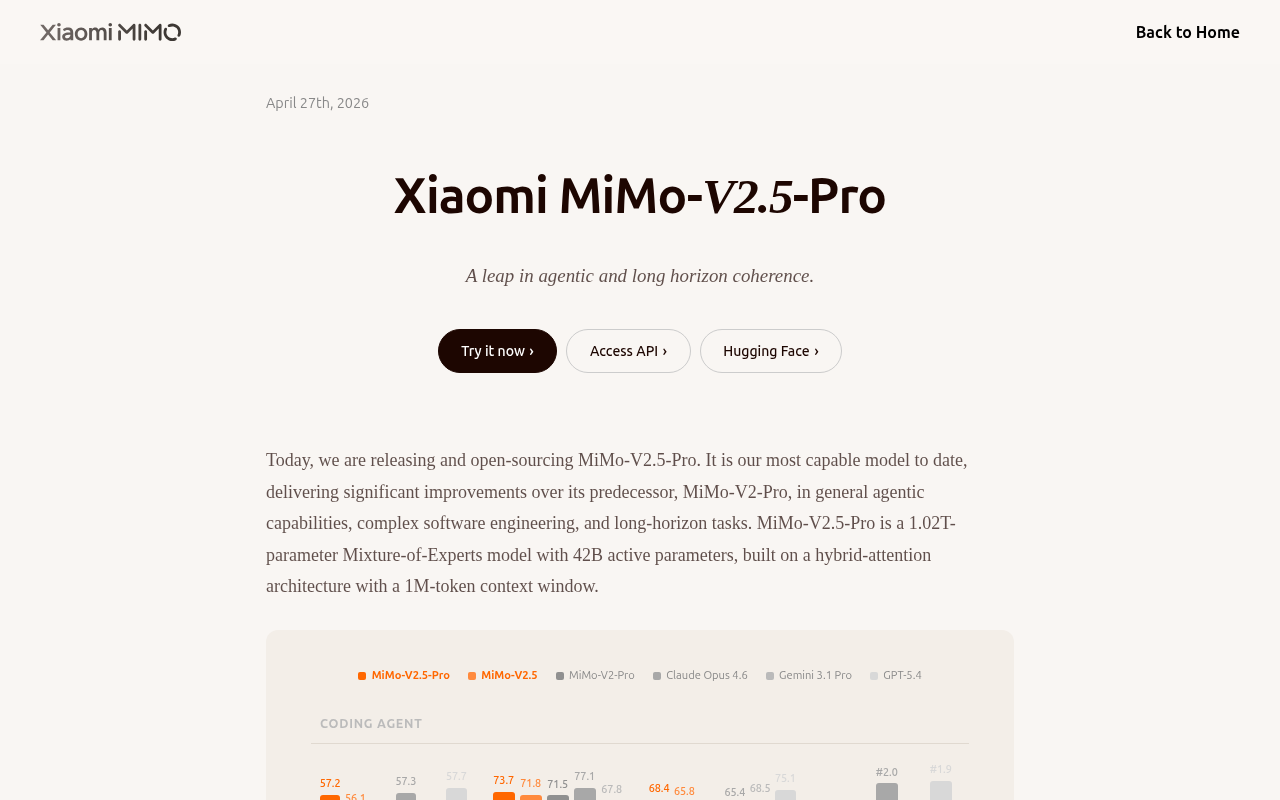

MiMo-V2.5 & Pro

Frontier agent capability with better token efficiency

AI Coding Agents

Kimi K2.6

Open-source SOTA for long-horizon coding and agent swarms

AI Coding Agents

BridgeSpace 3

The agent development environment to ship faster

AI Coding Agents

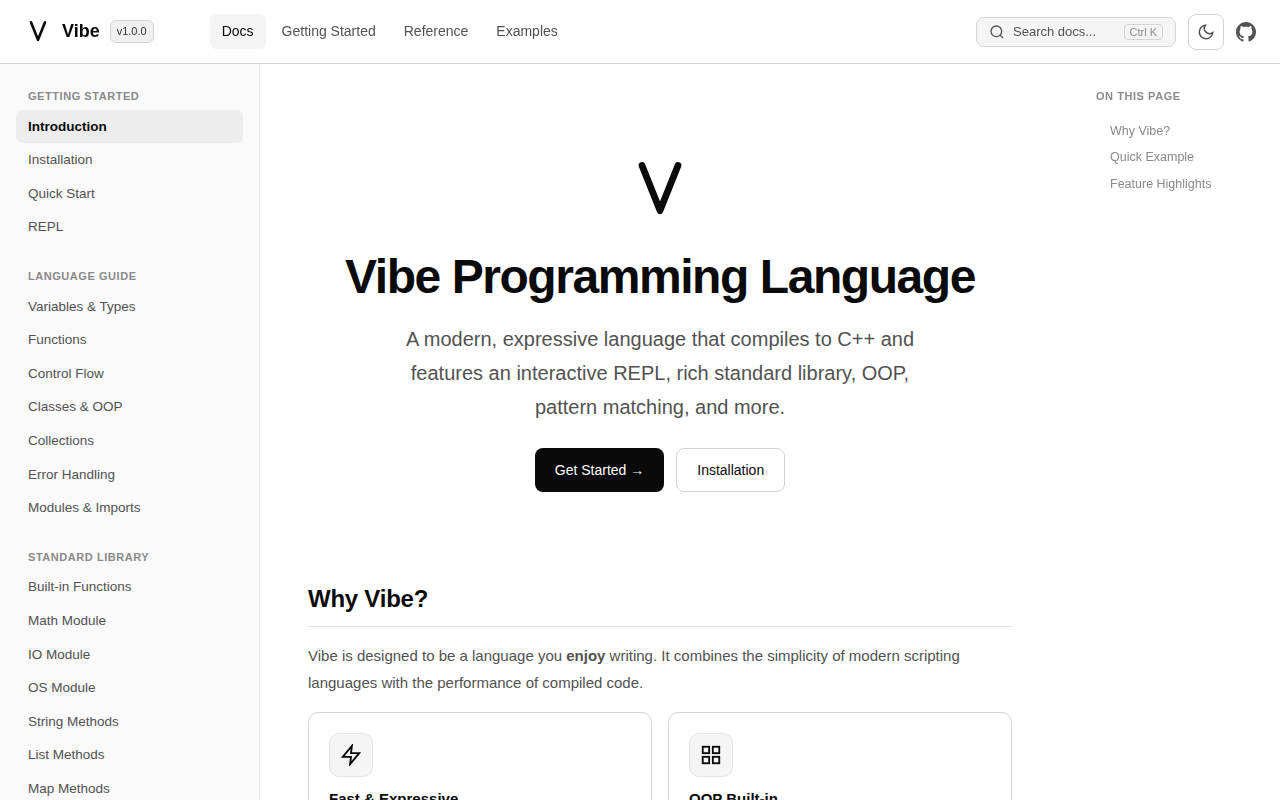

Vibe

Code simpler. Build faster. Meet Vibe.

AI Coding Agents

Trinity-Large-Thinking by Arcee

The first open model as performant as Opus 4.6, 96% cheaper

AI Coding Agents

Cursor 3

Unified workspace for parallel local/cloud agents and MCPs