MiMo-V2.5 & Pro

Frontier agent capability with better token efficiency

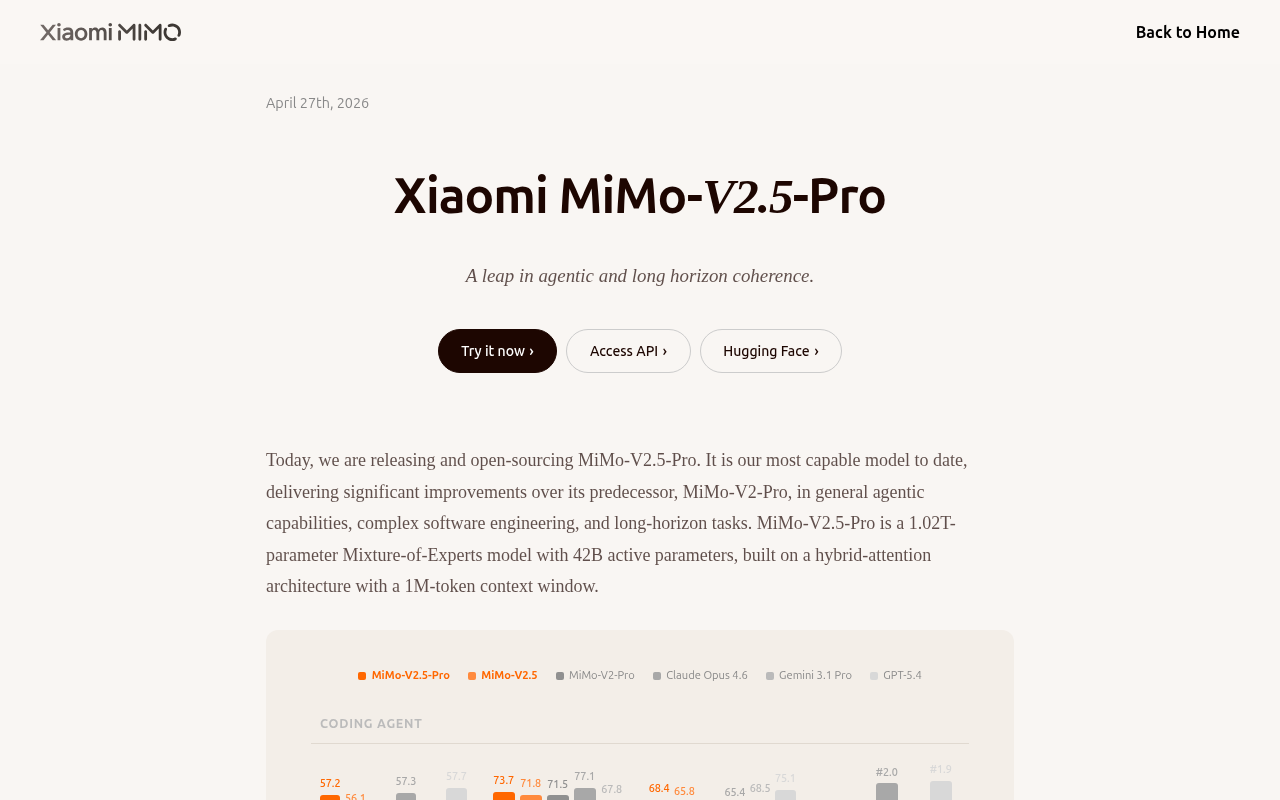

The model is a 1.02‑trillion‑parameter Mixture‑of‑Experts system with 42 billion active parameters and a hybrid‑attention architecture that supports a one‑million‑token context window. It is designed for frontier agent capabilities, emphasizing token efficiency and the ability to follow complex instructions over ultra‑long contexts. In internal benchmarks it sustains tasks requiring more than a thousand tool calls while maintaining coherence and adherence to subtle requirements embedded in the prompt.
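The parameter counts above imply a simple ratio. In a Mixture‑of‑Experts model, only a subset of the weights is used to process each token, although all 1.02 trillion weights still have to be stored. The sketch below computes that share from the two figures in the description. It is plain arithmetic, not a description of the model's actual routing.

```python
# Figures taken from the model description above.
TOTAL_PARAMS = 1.02e12   # 1.02 trillion total parameters
ACTIVE_PARAMS = 42e9     # 42 billion active parameters per token


def active_fraction(total: float, active: float) -> float:
    """Return the share of all parameters that are active for a single token."""
    return active / total


frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
print(f"{frac:.1%} of parameters active per token")  # prints "4.1% of parameters active per token"
```

Per‑token compute therefore scales with roughly 42B parameters rather than the full 1.02T.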

Typical users include researchers and developers who need an autonomous agent for demanding software‑engineering problems. The model has built a complete SysY compiler in Rust, from the lexer and parser through the RISC‑V backend and performance optimization. This took a few hours and thousands of tool calls and earned a perfect score on the test suite. It can also be prompted to create other sophisticated artifacts, such as a full‑featured video editor.

What distinguishes this release is its focus on long‑horizon, self‑correcting workflows that chain many sequential operations without losing consistency. The model is released as open source. It is also served through the same API and platform endpoints as prior versions, so existing integrations need only a model‑tag change to access the newer capabilities.
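Upgrading through the API should only require a change to the model tag. The sketch below illustrates this with a hypothetical request builder. Both tag strings are placeholders, because the listing gives no actual identifiers. The request body assumes an OpenAI‑compatible chat‑completions schema, which the listing does not confirm.

```python
import json

# Hypothetical model tags: the listing does not state the real identifiers.
OLD_MODEL_TAG = "mimo-v2-pro"     # hypothetical placeholder
NEW_MODEL_TAG = "mimo-v2.5-pro"   # hypothetical placeholder


def build_request(model: str, prompt: str, max_tokens: int = 1024) -> dict:
    """Build a chat-style request body (assumed OpenAI-compatible shape)."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def upgrade_model(request: dict, new_tag: str) -> dict:
    """Return a copy of the request with only the model tag swapped."""
    updated = dict(request)  # shallow copy; only the top-level "model" key changes
    updated["model"] = new_tag
    return updated


old_req = build_request(OLD_MODEL_TAG, "Write a lexer for SysY in Rust.")
new_req = upgrade_model(old_req, NEW_MODEL_TAG)
print(json.dumps(new_req, indent=2))
```

Keeping the rest of the request unchanged makes it easy to compare the old and new versions on the same prompts.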


Similar apps

AI Coding Agents

MiMo-V2.5 Voice

Bilingual ASR for dialects, code-switching, and songs

AI Coding Agents

Kimi K2.6

Open-source SOTA for long-horizon coding and agent swarms

AI Coding Agents

Mistral Medium 3.5

A 128B model for coding, reasoning, and long tasks

AI Coding Agents

MolmoWeb

Open web agents from data to deployment

AI Coding Agents

DeepSeek-V4

The open-source era of 1M context intelligence

AI Coding Agents

GLM-5V-Turbo

Vision-to-code foundation model for real GUI automation