Wafer Pass

Flat-rate access to the best LLMs for OpenClaw, Hermes Agent, etc.

The platform provides autonomous agents that profile, diagnose, and optimize GPU inference across the full stack, from low‑level kernels to model execution and production pipelines. By continuously analyzing workload characteristics, the agents adjust configurations to achieve higher throughput and lower latency, delivering what the service claims to be the fastest open‑source LLM inference on any hardware.

Users can subscribe to a flat‑rate plan that grants access to a catalog of frontier open‑source models such as Qwen3.5‑397B‑Turbo, GLM5.1‑Turbo, and DeepSeekV4‑Pro‑Turbo. The subscription includes a defined number of requests per five‑hour window and offers options for private workloads with zero data retention. Pricing tiers are presented for solo developers and enterprise customers, with the ability to cancel at any time.

The service emphasizes rapid deployment, promising custom inference optimization for bespoke models within a day. It is positioned as an enterprise solution that pairs its optimization agents with a subscription for continuous access to the latest high‑throughput LLMs.

Similar apps

DevOps & Infrastructure

OpenInfer

Keep your OpenClaw agents running. Free beta, no code change

AI Agents & Automation

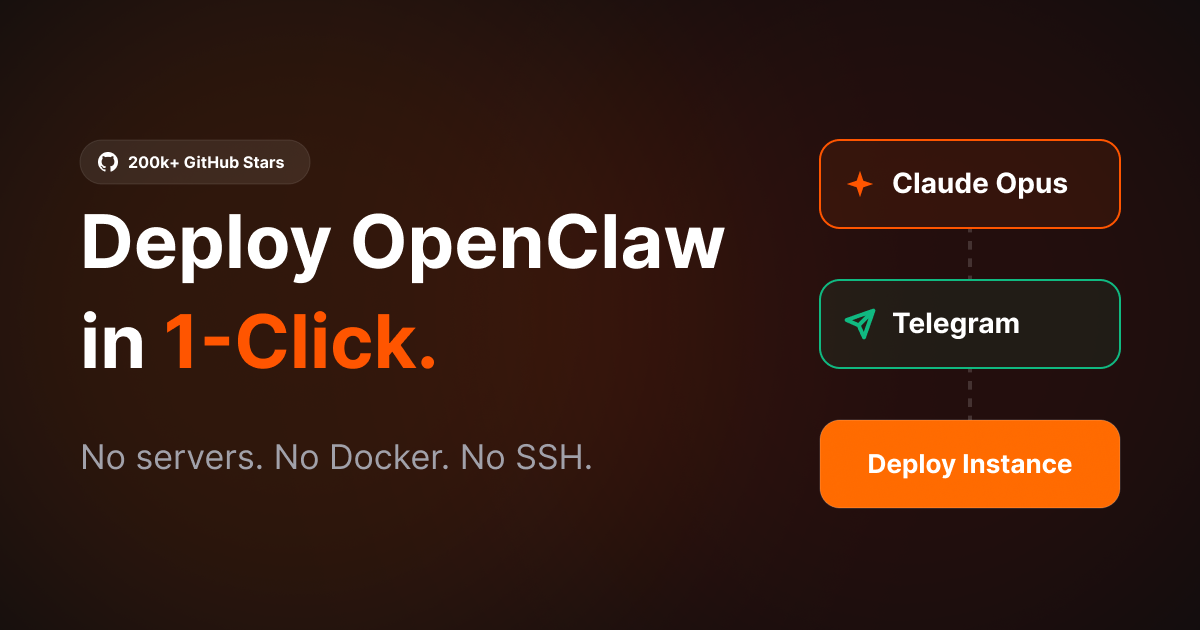

PrimeClaws - OpenClaw and Hermes VPS Hosting 24/7

PrimeClaws — managed OpenClaw VPS hosting, 24/7. GPT-5.4, Kimi K2.5, Deepseek V3.2 included free. No DIY setup. Your agent live in 60…

AI Chat & Voice Agents

LunoGen - Ai Agent on WhatsApp

OpenClaw Alternative - Faster, Secure & 3× Less Token Usage

AI Coding Agents

OpenRouter

Provides a unified API to access multiple large language models from various providers.

AI Coding Agents

Otter Code

Code on your terms. Local AI coding agent.

Network & Connectivity

ClawRapid

OpenClaw secured in less than a minute