Calcis

Know what your LLM calls cost before you make them.

Calcis provides pre‑flight cost estimates for calls to large language model (LLM) APIs, so users can see what a request will cost before it is sent. It supports models from OpenAI, Anthropic, and Google, tracks more than 28 models, and updates pricing within hours of any change. The service offers a price ledger, a REST API, a CLI, and a GitHub Action, giving engineers a reliable source of truth for LLM pricing that can be integrated into development workflows.

The tool is aimed at engineers and teams that need to manage AI spend and avoid unexpected charges. Because it uses the same tokenizers as each provider, Calcis can calculate per‑call costs from input and output token counts, and it presents the results in a searchable index and a live comparison view. It logs price changes, timestamps each update, and verifies the information to maintain accuracy.
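The per‑call calculation described above can be sketched roughly as follows. This is a minimal illustration, not Calcis's actual implementation: the `ModelPrice` type, the `PRICES` table, the model names, and the `estimate_cost` function are all hypothetical, and the prices are placeholders rather than real provider rates. Token counts are assumed to come from the provider's own tokenizer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Price in USD per one million tokens (placeholder values, not real rates)."""
    input_per_mtok: float
    output_per_mtok: float


# Hypothetical price table; a real one would be loaded from a pricing source.
PRICES = {
    "example-model-small": ModelPrice(input_per_mtok=0.50, output_per_mtok=1.50),
    "example-model-large": ModelPrice(input_per_mtok=5.00, output_per_mtok=15.00),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one call from its input and output token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    price = PRICES[model]  # raises KeyError for an unknown model
    total = (
        input_tokens * price.input_per_mtok
        + output_tokens * price.output_per_mtok
    )
    return total / 1_000_000


if __name__ == "__main__":
    # 1,000 input tokens and an expected 500 output tokens on the small model.
    cost = estimate_cost("example-model-small", 1_000, 500)
    print(f"estimated cost: ${cost:.6f}")
```

Because the output token count is not known before a call is made, a pre‑flight estimate like this usually relies on an expected or maximum output length, so the result is an upper bound or a projection rather than the final bill.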

Calcis is experimental software, maintained as an open‑source project. It focuses on making LLM usage costs transparent and predictable up front rather than on post‑mortem billing analysis, and it can be accessed via the command line, the API, or a CI/CD integration.

Similar apps

API & Network Testing

Octopus AI Model Intel

Real-time pricing API for GPT-4o, Claude, Gemini & 20+ models

DevOps & Infrastructure

LLM Ops Toolkit by Lamatic.ai

Aggregate uptime monitoring across OpenAI, Claude, and more

AI Coding Agents

KostAI

Cut LLM spend by up to 92 percent with governed routing

System Monitoring & Maintenance

AgenSights

Know exactly which AI agent is burning your budget.

Network & Connectivity

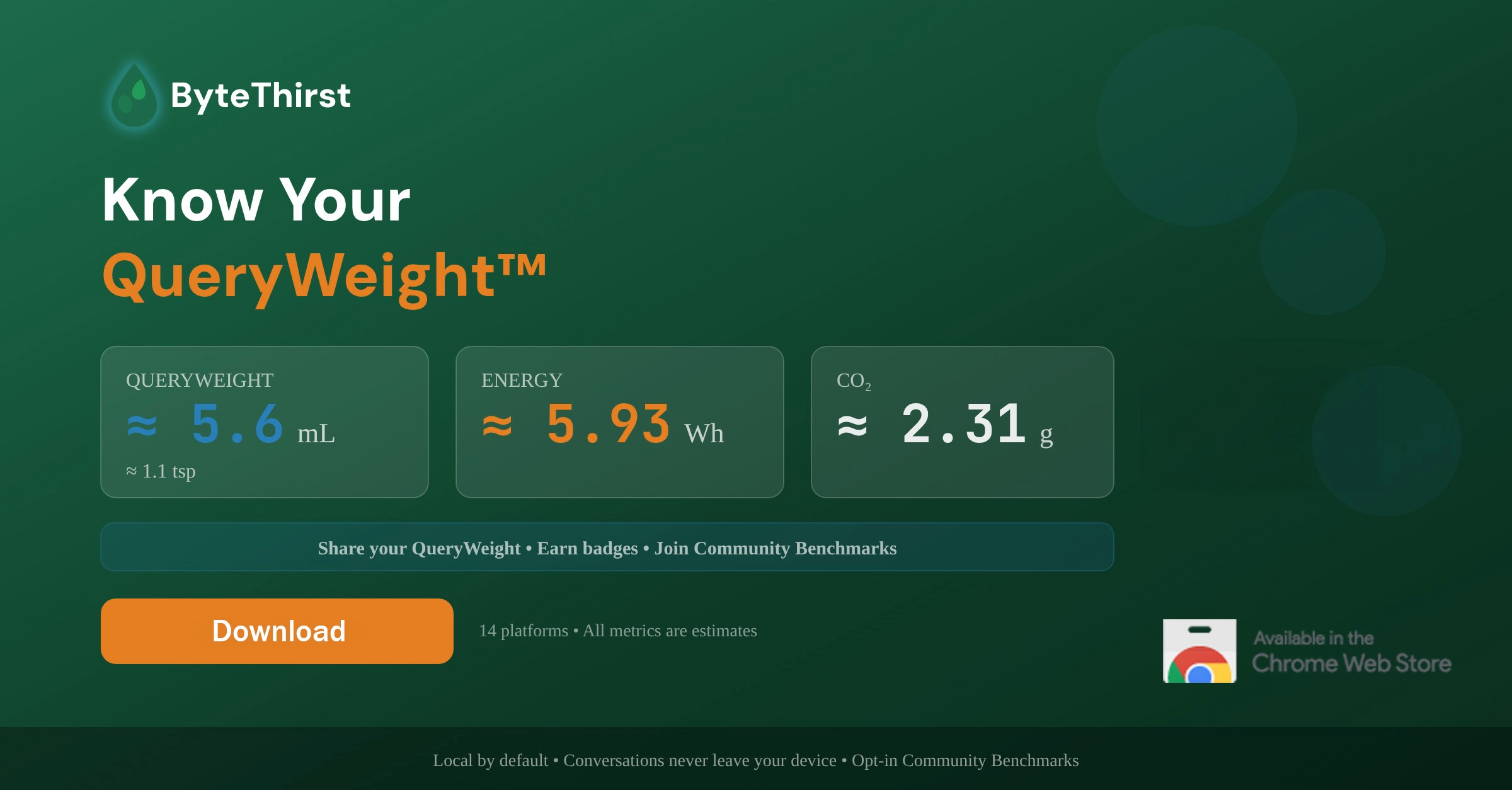

ByteThirst

Know the water, energy & CO₂ cost of every AI prompt

API & Network Testing

Recost

Your API costs fully visible.