Manifest

Complete backend that fits into 1 YAML file.

Manifest is a routing layer for large-language-model queries that selects the most cost-effective provider for each request. It evaluates a request's complexity and specificity in under two milliseconds, then forwards it to a suitable model from a pool of over 300 models across 16 major providers, plus any OpenAI-compatible custom endpoint. By consolidating routing behind a single `/auto` endpoint, it keeps agents and applications from sending simple tasks to oversized models, which can reduce token expenses by up to 70%.
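To make the routing idea concrete, here is a minimal sketch of complexity-based model selection. It is not Manifest's actual scoring logic, which this description does not detail: the heuristic, the tier thresholds, and the model names (`small-model`, `medium-model`, `large-model`) are all assumptions chosen for illustration.

```python
# Hypothetical tiers: (maximum score for this tier, placeholder model name).
TIERS = [
    (0.3, "small-model"),
    (0.7, "medium-model"),
    (1.0, "large-model"),
]

# Assumed keywords that hint at a harder, reasoning-heavy request.
REASONING_HINTS = ("prove", "refactor", "step by step", "analyze", "architecture")


def complexity_score(prompt: str) -> float:
    """Return a crude 0..1 score built from prompt length and keyword hits."""
    # Length contributes up to 0.5, saturating at 200 words.
    length_part = min(len(prompt.split()) / 200, 1.0) * 0.5
    # Keywords contribute up to 0.5, saturating at two distinct hits.
    text = prompt.lower()
    hits = sum(1 for hint in REASONING_HINTS if hint in text)
    keyword_part = min(hits / 2, 1.0) * 0.5
    return length_part + keyword_part


def pick_model(prompt: str) -> str:
    """Pick the cheapest tier whose ceiling covers the prompt's score."""
    score = complexity_score(prompt)
    for ceiling, model in TIERS:
        if score <= ceiling:
            return model
    return TIERS[-1][1]
```

With these assumed thresholds, a short factual question lands in the smallest tier, while a long prompt containing several reasoning keywords is sent to the largest one.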

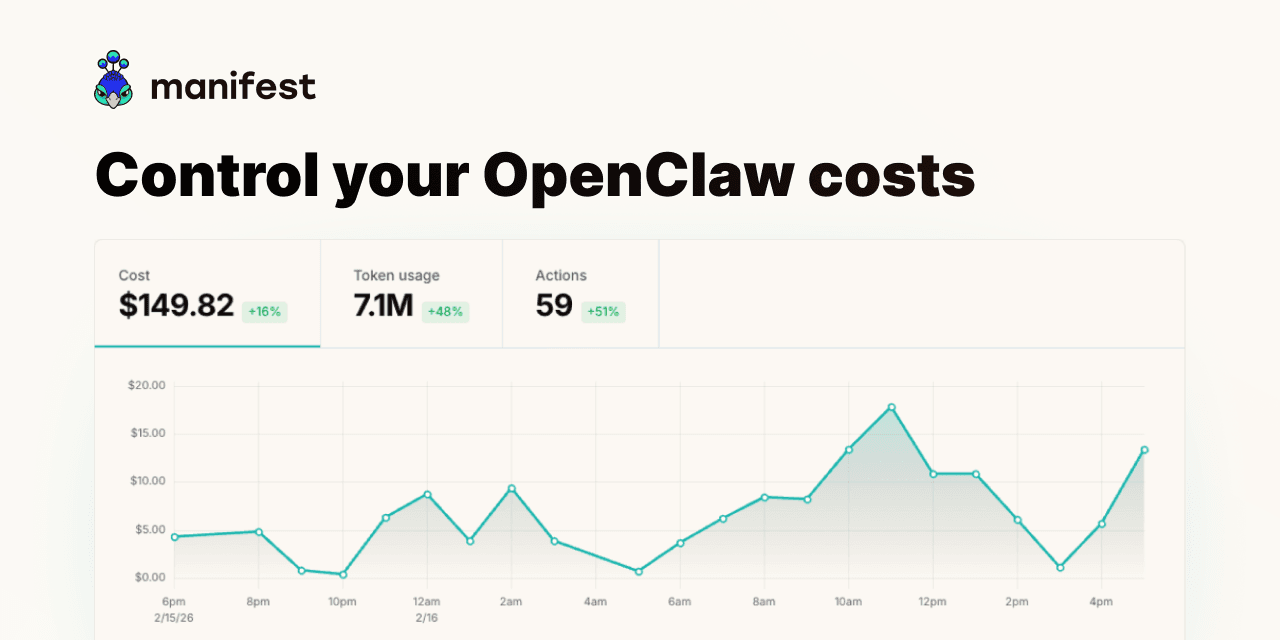

The system is self‑hostable via a Docker image and can also be run as a managed cloud service. Users configure API keys, subscriptions, or local model instances, and Manifest tracks per‑message token counts, costs, and cache usage. It supports budgets, rate‑limit alerts, and email notifications when spending thresholds are reached, as well as automatic fallback to alternative models if a primary provider fails or exceeds its quota.
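The fallback and budget behavior described above can be sketched in a few lines. This is an illustration under stated assumptions, not Manifest's implementation: `ProviderError`, `call_with_fallback`, `budget_alert`, and the 80% default threshold are hypothetical names and values.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Hypothetical error for a provider that fails or is over its quota."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and move on to the next provider in the list.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


def budget_alert(spent_usd: float, budget_usd: float, threshold: float = 0.8) -> bool:
    """Return True once spending reaches the given fraction of the budget."""
    return spent_usd >= budget_usd * threshold
```

A caller would pass an ordered list such as `[("primary", call_primary), ("backup", call_backup)]` and send a notification whenever `budget_alert` returns `True`.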

Designed for developers building autonomous AI agents, such as LangChain-based agents or personal assistants, Manifest offers drop-in compatibility with the OpenAI API, allowing existing code to route through it without modification. The open-source, MIT-licensed project logs request metadata transparently while keeping prompts and responses private, and it includes a web UI for monitoring usage and managing routing tiers.
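As a rough illustration of what OpenAI API compatibility means in practice, the sketch below builds a standard chat-completions request aimed at a self-hosted router instead of OpenAI. The base URL, port, API key, and the `auto` model name are assumptions for this example; consult the project's documentation for the real values. The request is only constructed here, not sent.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat-completions request for a compatible endpoint."""
    body = {
        "model": "auto",  # assumed placeholder that lets the router choose the model
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical local deployment; only the base URL differs from a direct OpenAI call.
request = build_chat_request("http://localhost:8080/v1", "sk-example", "Hello")
```

Because the payload follows the OpenAI chat-completions shape, client code that already speaks that format typically only needs its base URL and key changed.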

Similar apps

AI Coding Agents

KostAI

Cut LLM spend by up to 92 percent with governed routing

AI Coding Agents

Langfuse

LLM engineering platform for model tracing, prompt management, and application evaluation. Langfuse helps teams collaboratively debug…

DevOps & Infrastructure

ZenMux

The Enterprise LLM Platform. Get a Unified API for all models, intelligent routing, and AI Model Insurance to eliminate hallucination risk.

DevOps & Infrastructure

Appwrite

End to end backend server for web, native, and mobile developers 🚀.

DevOps & Infrastructure

LLMKube

Kubernetes operator for llama.cpp-native LLM inference with GPU scheduling, Apple Silicon Metal support, and OpenAI-compatible API.

AI Coding Agents

CodeRouter

Cut your AI coding bill 70% with automatic task routing